IBM has announced the industry’s first published reference architecture for quantum-centric supercomputing (QCSC), offering a technical blueprint for combining quantum processing units (QPUs) with traditional HPC infrastructure.

The framework focuses on computational problems that exceed traditional computing capabilities, particularly in molecular simulations and quantum chemistry calculations, where quantum mechanics governs system behavior.

Key elements of the announcement include:

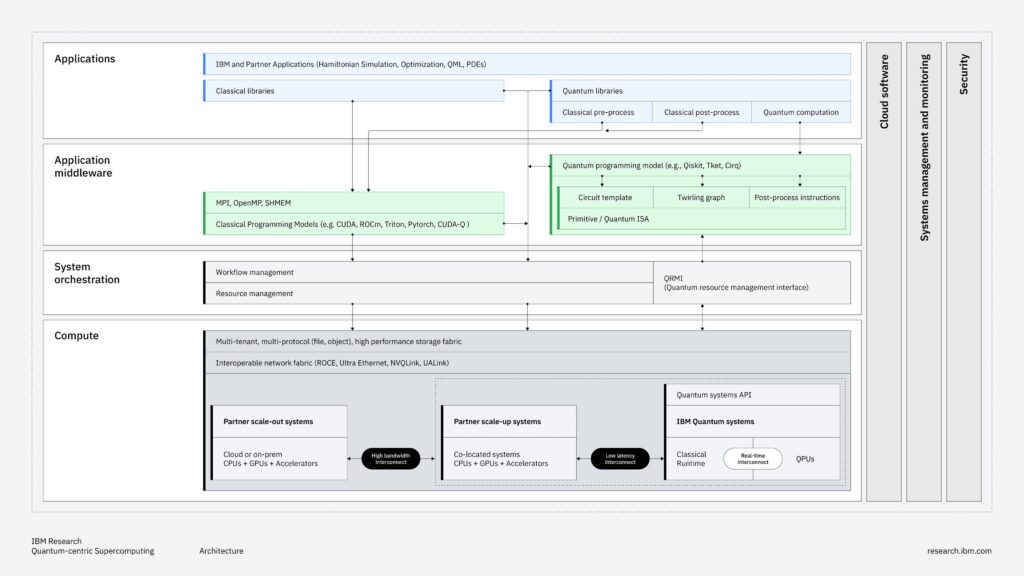

- A four-layer architecture stack comprising hardware infrastructure, system orchestration, application middleware, and applications.

- A three-phase implementation roadmap spanning several years.

- Early validation results from partner institutions, including RIKEN, Cleveland Clinic, and academic research consortia.

The reference architecture aligns with IBM’s broader quantum roadmap and highlights the increasing recognition that practical quantum computing will develop through hybrid classical-quantum workflows rather than standalone quantum systems.

Why This is Important

Current quantum computing systems operate separately from traditional HPC environments. When researchers and computational scientists try to run hybrid quantum-classical workloads (the only practical approach for near-term quantum utility), they encounter a fragmented operational landscape. Users must manually manage workloads across different systems, coordinate job scheduling between incompatible resource managers, and create custom data transfer pipelines between quantum processors and classical compute clusters.

This fragmentation is a critical obstacle: it hampers rapid algorithmic exploration and blocks the adoption of quantum computing within existing HPC workflows. Without standardized integration patterns, each institution must build its own custom solutions, which duplicates effort and slows knowledge sharing across the quantum computing community.

IBM’s reference architecture addresses the needs of several stakeholder groups:

- Computational scientists in chemistry, materials science, and molecular simulation gain integration patterns for embedding quantum subroutines within larger classical workflows.

- HPC system administrators and architects gain a framework for integrating quantum resources into existing infrastructure without completely replacing scheduling, monitoring, and security systems.

- Quantum algorithm researchers benefit from clearly defined interfaces that specify where quantum and classical responsibilities split.

- Technology strategists at research institutions, national laboratories, and enterprises with active HPC investments can use the architecture as a framework to evaluate quantum capability roadmaps.

Let’s delve into IBM’s announcement.

Technical Details

IBM’s QCSC reference architecture outlines a complete system stack for integrating quantum and classical systems, organized into four horizontal layers with three cross-cutting concerns. At its base, the framework acknowledges that quantum processors alone cannot solve practically useful problems and instead need tight integration with classical computing resources for pre-processing, post-processing, error mitigation, and workflow orchestration.

Hardware Infrastructure Layer

The bottommost layer outlines three distinct classical hardware levels, each with different computing capabilities, proximity requirements, and connection relationships to QPUs. The innermost level is the quantum system itself, which comprises a classical runtime and one or more QPUs connected via real-time interconnects. Its classical processors (FPGAs, custom ASICs, and CPUs) must respond within qubit coherence times (measured in microseconds) for tasks such as quantum error correction decoding, mid-circuit measurements, and active qubit reset.

The next level consists of co-located partner scale-up systems (e.g., traditional CPU and GPU systems) that sit physically close to the quantum system and connect to it through low-latency links such as RoCE, Ultra Ethernet, or NVIDIA’s NVQLink. This constitutes the “near-time” interconnect tier.

Beyond this is the scale-out tier, which consists of distributed CPU and GPU clusters that could be on-premises or cloud-connected. These handle larger classical workloads, including tensor network contractions, large-scale diagonalization, and workflow management.
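The three tiers can be pictured as a small lookup keyed on how quickly a classical task must respond. The sketch below is illustrative only: the `Tier` class, the `pick_tier` helper, and especially the `round_trip_us` values are assumptions made for this example, not figures from IBM's architecture document.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    interconnect: str
    round_trip_us: float  # ASSUMED placeholder latency, not an IBM figure


# Ordered from innermost (closest to the QPU) to outermost.
TIERS = (
    Tier("quantum-system runtime", "real-time", 1.0),
    Tier("co-located scale-up", "near-time", 100.0),
    Tier("scale-out cluster", "on-premises or cloud network", 10_000.0),
)


def pick_tier(deadline_us: float) -> Tier:
    """Return the outermost tier whose assumed round trip fits the deadline."""
    fitting = [t for t in TIERS if t.round_trip_us <= deadline_us]
    if not fitting:
        raise ValueError("no tier can meet this deadline")
    return fitting[-1]
```

Under these placeholder numbers, a task with a 5 µs deadline stays inside the quantum system, while a task that can wait a second is free to run on the scale-out cluster.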

System Orchestration Layer

The System Orchestration Layer addresses resource allocation and workflow coordination across heterogeneous quantum-classical resources.

IBM introduces the Quantum Resource Management Interface (QRMI), a lightweight library that makes quantum resources available to existing HPC resource managers like Slurm. QRMI offers APIs for resource allocation, job scheduling, and job management without needing changes to Slurm’s core scheduler.

The current implementation uses a SPANK (Slurm Plug-in Architecture for Node and job Kontrol) plugin that exposes QPUs as generic resources.
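To make the "QPU as a generic resource" idea concrete, here is a toy model of a scheduler that treats a QPU as one more countable resource on a node. It is not the QRMI API and not Slurm code; `Node`, `Job`, `allocate`, and the resource name `"qpu"` are all hypothetical names chosen for this sketch.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    free: dict  # resource name -> available count, e.g. {"cpu": 64, "qpu": 1}


@dataclass
class Job:
    name: str
    needs: dict  # resource name -> requested count


def allocate(job: Job, nodes: list):
    """Place the job on the first node that satisfies every request.

    Returns the node name, or None if the job has to wait.
    """
    for node in nodes:
        if all(node.free.get(res, 0) >= n for res, n in job.needs.items()):
            for res, n in job.needs.items():
                node.free[res] -= n
            return node.name
    return None


nodes = [
    Node("cpu-node", {"cpu": 64}),
    Node("qpu-gateway", {"cpu": 8, "qpu": 1}),
]
first = allocate(Job("hybrid-a", {"cpu": 4, "qpu": 1}), nodes)
second = allocate(Job("hybrid-b", {"cpu": 4, "qpu": 1}), nodes)
classical = allocate(Job("classical", {"cpu": 32}), nodes)
```

The point of the sketch is that the placement logic never special-cases the QPU, which mirrors the article's claim that the scheduler core does not need to change.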

Application Middleware Layer

The framework introduces Tensor Compute Graphs (TCG) as a unifying execution model for hybrid quantum-classical workflows. In a TCG, nodes represent operations that input and output tensors, while edges connect outputs to inputs.

Quantum circuits become nodes within this broader graph, alongside classical subroutines for error mitigation, post-processing, and iterative parameter optimization.

This abstraction enables quantum and classical programming models to coexist and allows for the development of complex hybrid algorithms, including those with multiple feedback loops functioning at different timescales.
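A minimal sketch of the idea, assuming nothing about IBM's actual TCG implementation: nodes are plain Python callables, edges are named dependencies, and plain lists stand in for tensors. The `TensorComputeGraph` class and the node names are hypothetical, and the "circuit" node is a classical stub rather than a real QPU call.

```python
from graphlib import TopologicalSorter


class TensorComputeGraph:
    def __init__(self):
        self.ops = {}     # node name -> callable producing that node's output
        self.inputs = {}  # node name -> names of the nodes feeding it

    def add(self, name, fn, inputs=()):
        self.ops[name] = fn
        self.inputs[name] = tuple(inputs)

    def run(self):
        """Evaluate every node once, upstream nodes first."""
        values = {}
        for name in TopologicalSorter(self.inputs).static_order():
            args = [values[src] for src in self.inputs[name]]
            values[name] = self.ops[name](*args)
        return values


g = TensorComputeGraph()
g.add("params", lambda: [0.1, 0.2])
# Stub for a quantum circuit node: returns fixed measurement bits.
g.add("circuit", lambda params: [1, 0, 1, 1], inputs=["params"])
# Classical post-processing node: fraction of shots that measured 1.
g.add("postprocess", lambda bits: sum(bits) / len(bits), inputs=["circuit"])
result = g.run()
```

Because the quantum step is just another node, an error-mitigation or optimizer node can be added with the same `add` call and scheduled by the same traversal.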

Applications Layer

The top layer includes specialized libraries and solvers that utilize quantum embedding techniques. These libraries convert domain problems (such as chemistry Hamiltonians and optimization graphs) into mixed formats of tensors and quantum circuits, manage application-specific circuit synthesis, and apply post-processing algorithms like Sample-based Quantum Diagonalization (SQD) to derive meaningful results from quantum measurements.

Integration Patterns & Coupling Requirements

As part of its announcement, IBM identified five distinct use case patterns that drive architectural requirements, differentiated by temporal coupling (how tightly synchronized quantum and classical operations must be) and spatial coupling (physical proximity and interconnect bandwidth requirements):

- Batch-time integration with loose coupling: Standard SQD workflows where quantum and classical jobs operate independently. Quantum systems produce bitstring samples representing electronic configurations, and then classical HPC handles configuration recovery, subsampling, and diagonalization. This pattern tolerates queue delays and typical network latency, allowing systems to be co-located or distributed across cloud connections.

- Batch-time integration with tight spatial coupling: Closed-loop workflows where outputs from one system inform parameter optimization on the other. This pattern benefits from co-location under a single organizational control for prioritizing coupled jobs.

- Error mitigation workflows: Tensor-network error mitigation and Pauli propagation methods require substantial CPU/GPU compute, high-throughput data movement, and rapid orchestration between quantum execution and classical analysis. As circuits scale, classical computational requirements for error mitigation can rival or exceed quantum execution costs.

- Open-loop error correction research: High-bandwidth communication for decoder development and evaluation without strict real-time feedback requirements. Syndrome streams pass through high-bandwidth links to scale-out GPUs, TPUs, or CPUs for offline decoding research.

- Hierarchical error correction: Future fault-tolerant workflows with inner codes operating at hardware timescales (microsecond syndrome measurements) and outer codes functioning at 100μs to 1ms timescales. This pattern requires low-latency, scalable classical systems for outer-code processing, while inner codes remain integrated within quantum-system control electronics.
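The closed-loop pattern in the second bullet can be sketched as a loop in which each classical update depends on the latest quantum result. Everything here is an assumption for illustration: `measured_energy` is a classical stand-in that returns `cos(theta)` instead of running a circuit, and the finite-difference gradient descent is a generic optimizer, not a method named in IBM's document.

```python
import math


def measured_energy(theta: float) -> float:
    """Stand-in for a QPU job (ASSUMED): returns cos(theta), no hardware involved."""
    return math.cos(theta)


def closed_loop_minimize(theta: float, steps: int = 50, lr: float = 0.4, h: float = 1e-3):
    for _ in range(steps):
        # "Quantum" side: two evaluations around the current parameter.
        e_plus = measured_energy(theta + h)
        e_minus = measured_energy(theta - h)
        # Classical side: finite-difference gradient, then a descent step.
        grad = (e_plus - e_minus) / (2 * h)
        theta -= lr * grad
    return theta, measured_energy(theta)


theta_opt, energy_opt = closed_loop_minimize(0.5)
```

Each iteration needs a round trip between the two sides, so queue delay and network latency are paid on every step. That is why this pattern benefits from co-location and prioritized scheduling, whereas the loosely coupled batch pattern does not.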

Key Algorithms and Methods

The architecture centers on several quantum-classical algorithms that demonstrate practical utility for chemistry and materials science problems, according to IBM and its research partners:

- Sample-based Quantum Diagonalization (SQD): A hybrid method in which quantum processors generate bitstring samples representing electronic configurations (Slater determinants), and classical HPC then performs configuration recovery, subsampling, and subspace diagonalization. The classical post-processing runs in a self-consistent loop until convergence. Researchers have applied this approach to molecular systems, including N₂, [2Fe-2S], and [4Fe-4S] clusters, using circuits up to 77 qubits and 10,570 gates.

- Sample-based Krylov Quantum Diagonalization (SKQD): An extension that generates samples from structured quantum circuits defining subspaces better suited for ground-state estimation.

- SqDRIFT: A variant used to model the physics of a newly synthesized “half-Möbius” molecule (a ring of carbon atoms with a half-twist in its electronic structure) in collaboration with researchers from Oxford, University of Manchester, ETH Zurich, EPFL, and University of Regensburg.

- Tensor-Network Error Mitigation (TEM): A technique that uses tensor-network calculations to effectively invert the effects of noise in quantum circuits. It maps ideal observable estimation onto modified observable measurement on noisy circuits, employing middle-out tensor contraction. According to IBM, this approach is more efficient than tensor-network simulation of the original circuit.
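The core classical step of SQD, subspace diagonalization, can be illustrated on a toy problem. This sketch makes several simplifying assumptions: the bitstring samples are hard-coded rather than drawn from a QPU, the Hamiltonian is an invented two-term model, configuration recovery and the self-consistent loop are omitted, and a shifted power iteration replaces the eigensolvers a real implementation would use.

```python
def hamiltonian_element(a: int, b: int) -> float:
    """Toy Hamiltonian (ASSUMED): H = sum_i (n_i - 0.5 * X_i) on bitstrings."""
    if a == b:
        return float(bin(a).count("1"))   # diagonal: number of set bits
    if bin(a ^ b).count("1") == 1:
        return -0.5                       # coupling between single-bit flips
    return 0.0


def subspace_ground_energy(samples, iterations: int = 500) -> float:
    """Lowest eigenvalue of H projected onto the distinct sampled bitstrings."""
    basis = sorted(set(samples))          # duplicates carry no extra information
    dim = len(basis)
    h = [[hamiltonian_element(a, b) for b in basis] for a in basis]

    def apply_h(v):
        return [sum(h[i][j] * v[j] for j in range(dim)) for i in range(dim)]

    # Gershgorin bound plus a margin, so (shift*I - H) is positive definite and
    # its dominant eigenvector is the ground state of the projected H.
    shift = 1.0 + max(
        h[i][i] + sum(abs(h[i][j]) for j in range(dim) if j != i)
        for i in range(dim)
    )
    v = [1.0] * dim
    for _ in range(iterations):
        hv = apply_h(v)
        w = [shift * v[i] - hv[i] for i in range(dim)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    hv = apply_h(v)
    return sum(v[i] * hv[i] for i in range(dim))  # Rayleigh quotient, |v| = 1


partial = subspace_ground_energy([0b00, 0b01, 0b00, 0b01])
full = subspace_ground_energy([0b00, 0b01, 0b10, 0b11])
```

For this toy model, the two-configuration subspace gives an energy of (1 − √2)/2, while sampling all four configurations recovers the exact ground-state energy 1 − √2. The estimate can only improve as the sampled subspace grows, which is the property that makes the quality of the quantum samples matter.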

Implementation Roadmap

The company outlines a three-phase roadmap for QCSC evolution:

- Phase 1 (Current): Quantum as an HPC Co-processor. Quantum systems act as specialized compute-offload engines within existing HPC setups. This phase lays the foundation with QRMI extensions to Slurm, basic Ethernet connectivity, and emerging closed-loop applications. Initial deployments at RIKEN and RPI show on-premises integration with current HPC systems.

- Phase 2: Heterogeneous Quantum-Classical Systems. Tighter coupling with dedicated co-designed scale-up infrastructure in close proximity to quantum systems. Specialized interconnects (e.g., Ultra Ethernet, NVQLink, UALink) provide ultra-low latency for real-time feedback loops. Resource management evolves to handle unified scheduling across heterogeneous resources with complex dependency and timing constraints.

- Phase 3: Fully Co-designed Systems. Quantum and classical resources architected as unified platforms from inception. IBM draws explicit parallels to GPU evolution, from PCIe-attached accelerators to tightly integrated systems like NVIDIA Grace Blackwell. This phase envisions unified programming models, integrated system software, and hardware optimized for quantum-classical synergy.

Validation Results

IBM and its partners cite several recent results as validation of the QCSC approach:

- Cleveland Clinic simulated a 300-atom tryptophan-cage mini-protein, described as one of the largest molecular models executed on a quantum-centric supercomputer. The work employed wave-function-based embedding (EWF) to fragment the molecule’s Hamiltonian.

- RIKEN and IBM achieved what they characterize as one of the largest quantum simulations of iron-sulfur clusters through closed-loop data exchange between an IBM Quantum Heron processor and all 152,064 compute nodes of RIKEN’s Fugaku supercomputer.

- Researchers from IBM, RIKEN, and the University of Chicago published results indicating SKQD outperformed state-of-the-art classical methods on certain ground-state energy problems.

- Algorithmiq, Trinity College Dublin, and IBM published methods in Nature Physics for simulating many-body quantum chaos systems using classical compute resources for noise mitigation.

Analysis

For HPC practitioners and computational scientists, IBM’s QCSC reference architecture provides a clear pathway to adopting quantum capabilities without a complete overhaul of their infrastructure.

The framework’s focus on integration with existing tools, such as Slurm scheduling, standard networking protocols, and familiar programming constructs, lowers barriers to adoption compared to standalone quantum methods:

- The QRMI interface enables quantum resources to be used as schedulable HPC assets, potentially making job management easier for hybrid workloads.

- Practitioners working in chemistry, materials science, and molecular simulation may find algorithms like SQD immediately useful because they offload the exponentially scaling quantum components to the QPU while maintaining familiar classical workflows for pre- and post-processing.

- The openly published architectural specification allows for evaluation and adaptation without vendor commitment.

For technology leaders assessing quantum computing investments, the announcement shows that quantum computing is moving from isolated laboratory demonstrations to integrated computational infrastructure.

Institutions should evaluate QCSC readiness based on three criteria:

- Existing HPC infrastructure maturity and willingness to extend it with specialized interconnects and co-located systems.

- Domain alignment with validated use cases, primarily computational chemistry and molecular simulation.

- Tolerance for multi-year capability development timelines with uncertain return horizons.

The significant classical infrastructure investments needed to support meaningful quantum workloads should not be underestimated. This is not just a software update but a fundamental expansion of computational architecture that requires ongoing commitment and expertise development.

Overall, IBM’s quantum-centric supercomputing reference architecture makes a significant technical contribution to the growing field of hybrid quantum-classical computing. The framework addresses real integration challenges, including resource management, workflow orchestration, security, and system monitoring, which institutions will encounter when trying to integrate quantum processors into existing HPC infrastructure.

With this reference architecture, IBM continues to showcase its leadership in quantum computing, and the release marks a significant step toward the commercialization of quantum technology.