Enterprise AI investment has reached a critical inflection point. Spending on AI infrastructure, platforms, and services is projected to exceed $200 billion annually by 2028. Yet most of that capital is flowing toward a visible, contested layer of the market: foundation models, AI-powered applications, and front-end tooling.

The less-examined question is who captures durable value when the application landscape consolidates and model commoditization sets in.

IBM’s recent Q1 2026 earnings results offer a clear answer to that question, at least from IBM’s perspective. The headline numbers were solid, with IBM reporting 6% revenue growth, expanding margins, strong free cash flow, and software acceleration.

The more consequential signal, however, came from the strategic narrative beneath those figures. IBM is not competing for the most visible positions in AI, but rather building the infrastructure layer that enterprises will ultimately depend on, regardless of their more tactical AI choices.

IBM’s Q1 results are worth examining less for what grew than for why the architecture of its business is becoming more relevant as AI adoption accelerates.

Mainframe as AI Engine

One of the more counterintuitive dynamics IBM described on the earnings call concerns the mainframe’s role in an AI-driven environment. Rather than losing relevance to cloud-native AI infrastructure, IBM Z is taking on a new role as an inference engine embedded directly in transactional systems.

The company cited use cases in which AI models run in-line with high-volume transaction streams, including capabilities such as fraud detection, payment authorization, and claims processing, without requiring data to leave the platform.

The economics of this model differ significantly from those of centralized AI inference. Three outcomes define the advantage of IBM’s approach:

- AI inference applies to 100% of transactions, not to sampled subsets, enabling comprehensive decisioning at scale.

- Latency drops to milliseconds because inference runs where the data already lives.

- Compute demand, which IBM tracks as “AI MIPS,” increases directly on the platform, driving utilization and revenue.

The implication is direct. In transactional environments, AI is increasing the value of existing IBM infrastructure rather than displacing it. This is not a temporary dynamic.

As inference becomes a standard component of transaction processing, demand for purpose-built infrastructure rises accordingly.
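
To make the coverage point concrete, here is a minimal Python sketch that contrasts scoring every transaction in the authorization path with scoring only a sampled subset. It is an illustration under stated assumptions, not IBM’s implementation: the `Transaction` type, the rule-based `fraud_score` stand-in for a trained model, the threshold, and the synthetic data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Transaction:
    # Hypothetical record shape, for illustration only.
    tx_id: int
    amount: float
    country: str


def fraud_score(tx: Transaction) -> float:
    """Hypothetical stand-in for a trained model; returns a risk score in [0, 1]."""
    score = 0.0
    if tx.amount > 5_000:
        score += 0.6
    if tx.country != "US":
        score += 0.3
    return min(score, 1.0)


def score_inline(transactions, threshold=0.8):
    """Score every transaction as it is processed; return the flagged IDs."""
    return [tx.tx_id for tx in transactions if fraud_score(tx) >= threshold]


def score_sampled(transactions, every_nth, threshold=0.8):
    """Score only every Nth transaction; return the flagged IDs."""
    return [
        tx.tx_id
        for i, tx in enumerate(transactions)
        if i % every_nth == 0 and fraud_score(tx) >= threshold
    ]


def make_transactions(n=1000):
    """Deterministic synthetic data: every 37th transaction is large and foreign."""
    txs = []
    for i in range(n):
        risky = i % 37 == 0
        txs.append(Transaction(i, 9_000.0 if risky else 40.0, "BR" if risky else "US"))
    return txs


if __name__ == "__main__":
    txs = make_transactions()
    print("flagged in-line:", len(score_inline(txs)))
    print("flagged with 1-in-10 sampling:", len(score_sampled(txs, every_nth=10)))
```

With this synthetic data, the in-line path flags all 28 risky transactions, while 1-in-10 sampling flags only 3 of them. The numbers are an artifact of the made-up data, but the shape of the gap is the point of the first bullet above: sampling can only catch what it happens to look at.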

Avoiding the Application Layer, Deliberately

During the earnings call, IBM management made an explicit point that only a small fraction of its portfolio qualifies as applications. The remainder falls into what the company calls “enabling software,” which includes data platforms, middleware, automation, and infrastructure.

IBM’s focus within the AI stack centers on data access and movement, model orchestration, workflow automation, and hybrid infrastructure. These layers sit between raw compute and user-facing applications.

They are also the layers that enterprises cannot easily replace or bypass, regardless of the foundation models or applications they choose.

IBM’s architecture and approach make the company largely model-agnostic and application-neutral. Enterprises running OpenAI models, Google Gemini, or open-source alternatives still need data pipelines, governance tooling, and hybrid orchestration.

IBM is building to serve that need, not to compete at the model or interface layer, where switching costs are lower and margins are under more pressure.

AI Revenue Is No Longer Theoretical

Many enterprise technology vendors are still translating the AI pipeline into bookings, and bookings into revenue. IBM’s Q1 results show that it’s further along that curve.

CEO Arvind Krishna said on the call that AI-related software is contributing meaningfully to current growth, with a run rate exceeding $1 billion and continuing to expand. In consulting, AI now accounts for approximately 30% of backlog and a growing share of recognized revenue.

This matters because the transition from experimentation to operationalization is the hardest step in enterprise AI deployment. AI revenue that shows up in core offerings, service delivery, and infrastructure consumption, rather than in a separate AI product line, is meaningful evidence that IBM’s approach is translating to production use.

IBM also highlighted internal AI deployment as a margin driver, reporting several billion dollars in productivity savings over the past two years. The company is using its own automation and AI tools to accelerate software development, modernize operations, and reduce service delivery costs.

This creates a compounding dynamic where internal efficiency gains fund faster product development, which in turn drives external revenue.

The Competitive Landscape

IBM’s AI infrastructure strategy occupies a distinct position relative to the major hyperscalers and platform vendors. Microsoft, Google, and Amazon are competing aggressively at the application and model layer, integrating AI into productivity suites, development tools, and cloud services at scale.

Oracle is building tightly integrated AI capabilities into its database and cloud infrastructure.

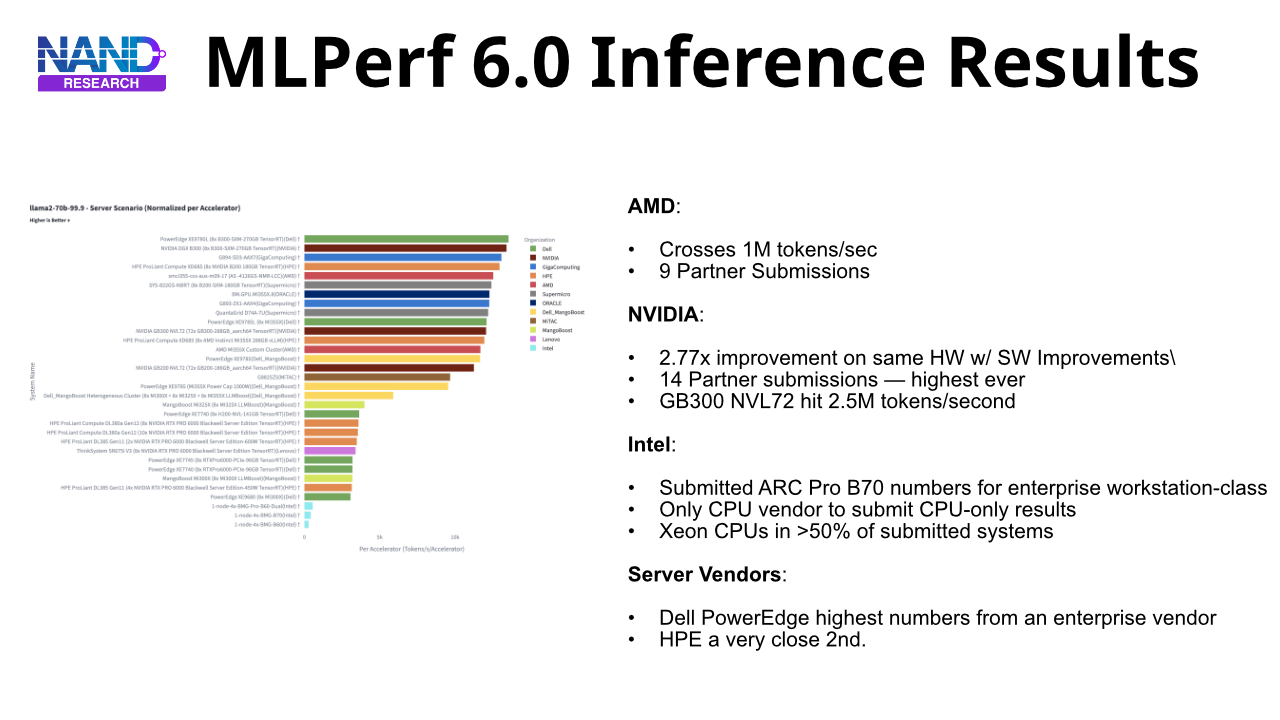

Meanwhile, Dell and HPE are focused on on-premises AI infrastructure for inference and training workloads.

IBM is not directly competing in most of these categories. Its differentiation lies in hybrid and multi-cloud orchestration, enterprise data governance, and the specific requirements of regulated industries, including financial services, healthcare, and government, where data sovereignty and transaction integrity are operational requirements.

Its recent acquisition of Confluent strengthens this position. Framed on the call as “foundational” for agentic AI architectures rather than as a conventional data integration play, Confluent addresses the need for continuously streaming, governed data feeding models and agents across heterogeneous environments.

Final Thoughts: What to Watch Over the Next Year

The emerging shift toward agentic AI architectures, where AI systems operate continuously across enterprise workflows rather than responding to discrete queries, plays directly to IBM’s strengths in data orchestration and hybrid infrastructure.

As agent-based systems mature, static datasets become insufficient and real-time data pipelines become load-bearing components of the AI stack. IBM’s position in that infrastructure layer will be tested by whether Confluent integration, watsonx orchestration, and IBM Z capabilities converge into a coherent platform that enterprises adopt at production scale.

Sovereign AI is a second theme worth tracking. Krishna emphasized on the call that enterprises and governments are increasingly focused on where AI workloads run, who controls the infrastructure, and whether systems remain resilient to external disruption. This marks a fundamental shift toward strategic IT autonomy, and IBM’s on-premises and hybrid portfolios are well positioned to address it.

Over the next two years, IBM’s central question is whether the connective tissue strategy, which builds the integration, governance, and orchestration layer beneath competing AI ecosystems, delivers durable differentiation or merely delays disintermediation.

The answer hinges on whether enterprises view IBM’s hybrid and data management capabilities as genuinely irreplaceable, or whether hyperscaler platforms eventually absorb enough of that functionality to reduce IBM to a legacy infrastructure vendor in a long decline.

Q1 2026 results do not fully resolve that question, but they confirm that IBM is executing its chosen strategy with discipline, that AI is contributing to current financial performance (beyond just the pipeline), and that the market segments IBM is targeting, including regulated industries, hybrid environments, and transactional AI workloads, are becoming more relevant.

For enterprise technology buyers, that makes IBM a more important vendor to evaluate carefully, not simply a legacy incumbent to manage.