At NVIDIA GTC 2026, Cisco announced a significant expansion of its Cisco Secure AI Factory with NVIDIA, broadening its validated AI infrastructure architecture from centralized data centers to enterprise and service provider edge deployments. The announcement includes hardware-accelerated security, updated switching silicon, new Cisco Validated Designs (CVDs), and a formalized multi-agent reference architecture.

The announcements show Cisco tackling operational and security challenges that arise as AI inference shifts from central data centers to distributed, latency-sensitive sites such as warehouses, retail stores, hospitals, and service provider networks.

The Secure AI Factory with NVIDIA, first introduced at GTC 2025, was built around Cisco AI PODs, a modular, validated reference architecture that combines Cisco networking and compute with NVIDIA AI infrastructure and software. This year’s announcement expands that architecture to the edge with support for the NVIDIA RTX PRO 4500 Blackwell Server Edition, the Cisco Unified Edge platform, and a new Cisco AI Grid with NVIDIA reference design for service providers.

The announcement also includes Cisco AI Defense support for NVIDIA’s OpenShell runtimes within the NVIDIA Agent Toolkit, expanding governance controls to agent-based tool-use scenarios.

Technical Details

The announcement covers five different technical areas: next-generation switching silicon, hardware-accelerated security, edge compute expansion, ecosystem software updates, and a new multi-agent reference architecture.

Together, they represent an incremental broadening of the Secure AI Factory blueprint rather than a ground-up redesign.

Networking & Silicon

Cisco adds a new tier of switching capacity to its AI factory portfolio with the N9100, a 102.4 Tbps switch powered by NVIDIA Spectrum-6 Ethernet switch silicon.

The N9100 expands Cisco’s NVIDIA Cloud Partner Reference Architecture (NCP RA)-compliant portfolio. The current N9100, based on Spectrum-4 silicon and rated for 800G scale-out deployments, is now generally available.

The newer Spectrum-6 variant and the N9300 based on Cisco Silicon One G300 silicon are positioned as the performance ceiling for the most demanding AI factory configurations, with P200-based deep-buffer scale-out switches rounding out the portfolio for traffic patterns requiring high buffer capacity.

Hardware-Accelerated Security

Cisco integrates its Hybrid Mesh Firewall technology into the NVIDIA BlueField DPU platform on AI servers connected to Nexus One fabrics. This shifts firewall policy enforcement from dedicated appliances into the server data path via the DPU, enabling zero-trust micro-segmentation of AI workloads at the hardware level.

This allows security policy to follow individual workloads rather than being applied at the network perimeter.

Edge Compute & Platform Expansion

Cisco expands the Secure AI Factory to the edge with two different deployment options. The enterprise edge level focuses on deployments on the Cisco Unified Edge platform, which includes firmware roots of trust, locking bezels with intrusion detection, and Intel TDX/SGX confidential computing support.

The edge tier for service providers is addressed by the Cisco AI Grid with NVIDIA, a reference design that combines Cisco’s Mobility Services Platform with NVIDIA RTX PRO Blackwell Series GPUs. Cisco says that this design allows service providers to deliver managed edge AI services with carrier-grade reliability and sovereignty over their current network infrastructure.

Specific edge capabilities include:

- Support for NVIDIA RTX PRO 4500 Blackwell Server Edition across the Cisco UCS portfolio and Cisco Unified Edge.

- Cisco Unified Edge nodes configured with 3-4 servers per site, running NVIDIA L4 GPUs for inference and computer vision workloads at the edge.

- Centralized lifecycle management of edge nodes through Cisco Intersight, providing unified operational visibility across distributed deployments.

AI Defense & Multi-Agent Security

Cisco AI Defense provides the security layer for multi-agent workloads across both the core and edge tiers. The system operates across three functional layers:

- Model scanning: AI Defense scans LLMs and SLMs via their exposed APIs and endpoints, identifies vulnerabilities in model files, and generates an AI Bill of Materials (AI-BOM) to support supply chain integrity.

- Runtime guardrails: Real-time prompt and response sanitization that detects prompt-injection attempts, prevents toxic content generation, and blocks the exfiltration of PII, PHI, and PCI data.

- NVIDIA Agent Toolkit integration: AI Defense supports NVIDIA’s OpenShell runtimes, adding governance controls and guardrails to agentic tool-use and claw actions, with continuous monitoring of every tool and API invoked by an agent.

Deployment Tooling & Ecosystem

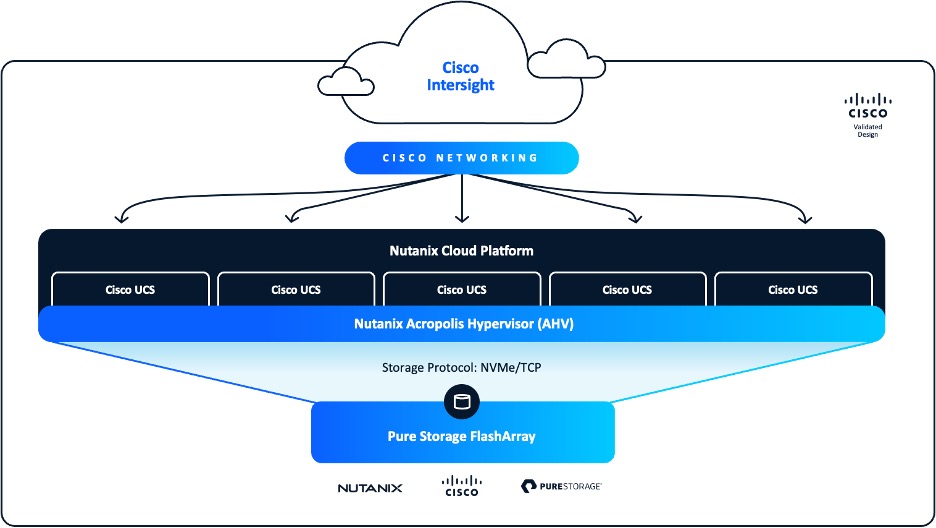

Cisco announced three new CVDs focused on AI model training on FlashStack, FlexPod, and VAST Data, along with a fourth CVD for VAST Data training and fine-tuning use cases.

The Secure AI Factory now also supports Red Hat AI Factory software, integrating Red Hat and NVIDIA technologies with optimized microservices and open-source AI development frameworks. This broadens the software options available to practitioners alongside the existing NVIDIA AI Enterprise pathway.

Analysis

Enterprise IT teams and AI infrastructure architects are the primary beneficiaries of the Secure AI Factory expansion, particularly those managing distributed AI deployments across multiple sites.

Cisco’s validated reference architecture model reduces the integration burden that has historically made edge AI deployments costly and slow to stand up, while CVDs eliminate trial-and-error configuration work and provide a tested, repeatable deployment path from core to edge.

Practical implications for practitioners include:

- Deployment acceleration: Cisco claims deployments can be compressed from months to weeks using AI PODs and CVDs as the blueprint. Independent validation of this claim is not yet available, and actual timelines will depend on organizational readiness, network complexity, and the degree to which existing infrastructure deviates from the reference architecture.

- Simplified Security Operations: Integrating Hybrid Mesh Firewall policies into BlueField DPUs brings security enforcement closer to workloads and decreases dependency on standalone appliances. However, it also requires operational familiarity with DPU-based security management, which is not yet common in most IT teams.

- Multi-agent deployment readiness: The AI Defense runtime guardrails and model scanning capabilities solve real security gaps in agentic workflows, but organizations still need to define their own agent governance policies. AI Defense offers the enforcement layer; policy creation remains the organization’s responsibility.

- Ecosystem lock-in considerations: The architecture is closely co-designed with NVIDIA. Organizations that prioritize hardware flexibility across different AI GPU vendors will need to evaluate the portability of their operational workflows and CVD-based configurations outside the Cisco-NVIDIA ecosystem.

Market Positioning

The Secure AI Factory expansion supports Cisco’s strategy of differentiating on security and operational simplicity rather than just raw computing power. While NVIDIA and its compute-oriented partners emphasize GPU density and throughput benchmarks, Cisco emphasizes validated integration, embedded security, and lifecycle management.

Cisco’s GTC 2026 announcements extend this positioning from the data center to the edge tier, where competitive differentiation increasingly matters as inference workloads are distributed.

Several items are worth noting:

- Security as a structural advantage: Cisco presents security not as an optional feature but as an essential part of its architecture, integrated at every layer of the stack. The combination of AI Defense with NVIDIA’s Agent Toolkit reinforces this position and gives Cisco a strong claim in the agentic AI security space that most networking vendors cannot currently rival.

- Core-to-edge continuity: The capability to deliver a unified operating model, security stance, and management plane from data center AI PODs to Cisco Unified Edge nodes is a true architectural benefit. Most competitors need separate toolchains for edge and core deployments.

- Service provider extension: The Cisco AI Grid with NVIDIA reference design targets a market segment that hyperscaler-centric AI factory architectures have not directly addressed. Service providers with existing network infrastructure represent a large addressable market for managed edge AI services.

Final Thoughts

Cisco’s announcements expand the Secure AI Factory from a data center reference architecture into a comprehensive, core-to-edge infrastructure platform. The updates include hardware-accelerated firewall enforcement with BlueField DPUs, AI Defense integration using the NVIDIA Agent Toolkit, edge compute support via Cisco Unified Edge, and a service provider-focused AI Grid reference design. This illustrates a clear strategy for supporting AI inference workloads wherever they are deployed.

For enterprise buyers evaluating AI infrastructure strategies, the Cisco Secure AI Factory with NVIDIA offers the most comprehensive, validated architecture for organizations seeking security embedded at every layer of the AI stack. The addition of agentic AI security controls, particularly the runtime guardrails and model scanning within AI Defense, addresses a gap that most competing platforms have yet to close.

Cisco is building infrastructure for the operational reality of enterprise AI: distributed, multi-agent, and requiring governance at a level that purpose-built AI compute vendors have not prioritized.