Dell Technologies announced a major expansion of its AI Data Platform at the recent NVIDIA GTC 2026 event. Dell’s AI Data Platform serves as the data foundation layer of its Dell AI Factory with NVIDIA. The announcement highlights three new architectural pillars:

- Data Orchestration Engine built on Dataloop technology (acquired in December 2025)

- GPU-accelerated analytics embedded directly into the data layer

- Two new high-performance storage innovations, Lightning File System and Exascale Storage

Together, these components address the most common barrier to enterprise AI deployment: the inability to prepare, move, and govern data at the speed and scale AI workloads demand.

The platform update is significant, as it elevates Dell beyond its traditional role as a hardware infrastructure provider into the data intelligence and orchestration layer. Competing storage vendors, including NetApp, VAST Data, HPE, and IBM, announced support for the NVIDIA STX architecture at the same event, narrowing the differentiation window at the storage level.

Dell’s strategy is to stand out by integrating data management, pipeline orchestration, GPU acceleration, and storage into one comprehensive platform, focusing on breadth over specialized solutions.

Dell AI Data Platform with NVIDIA

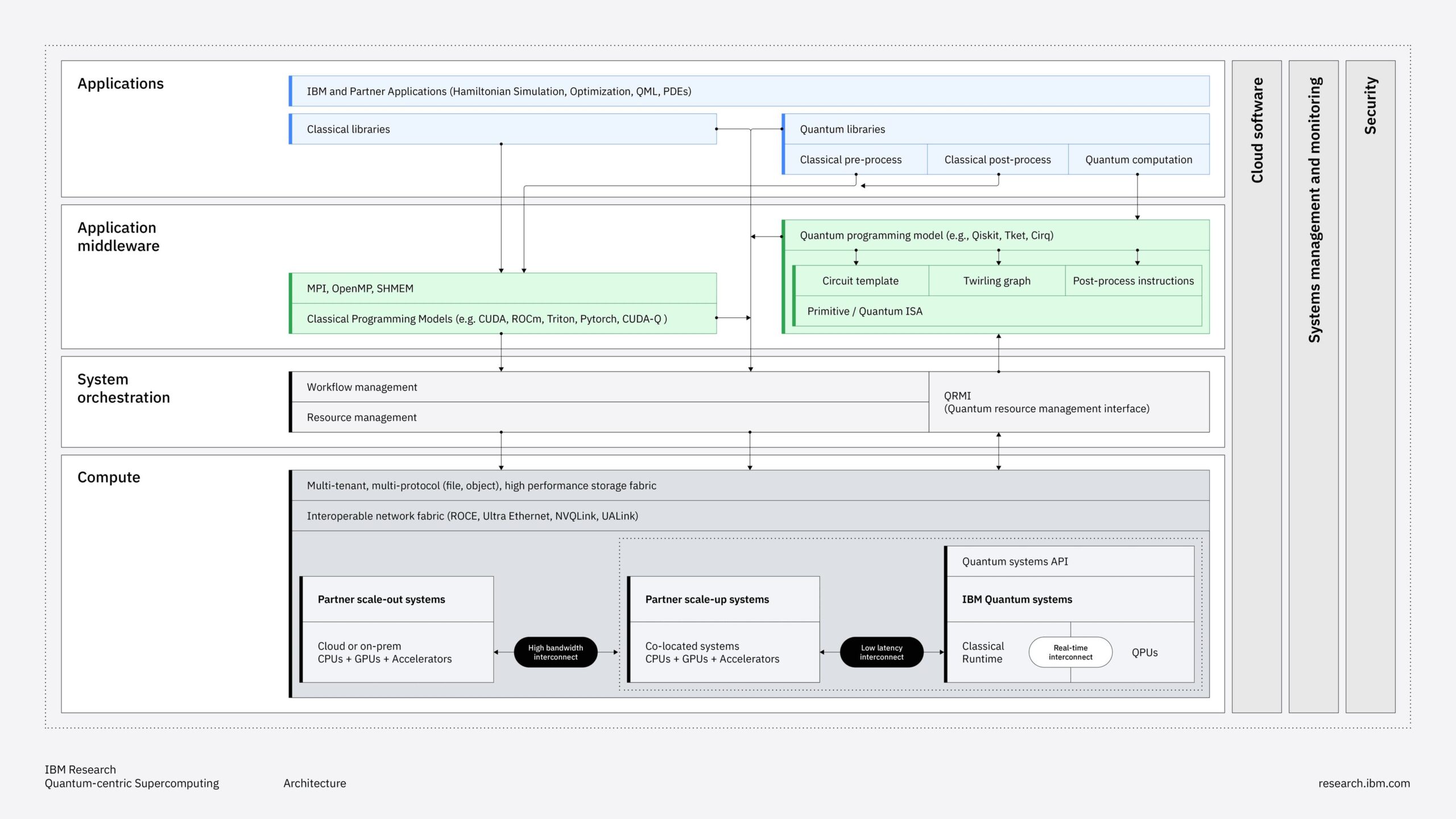

The Dell AI Data Platform with NVIDIA focuses on data orchestration, GPU-accelerated analytics, high-performance parallel file storage, and exascale multi-protocol storage capabilities. Each of these layers addresses a specific bottleneck in the enterprise AI pipeline, from raw data discovery to GPU-speed inference delivery.

All components run on Dell’s PowerEdge servers and integrate with NVIDIA’s networking, GPU, and software ecosystem.

Data Orchestration Engine

The Data Orchestration Engine is built on technology from Dell’s recent acquisition of Dataloop, an Israeli AI data pipeline management company. The engine automates the entire AI data lifecycle, from discovery to governance, offering both no-code and low-code interfaces for data teams without extensive engineering resources.

Key capabilities include:

- Automated discovery and ingestion of structured, unstructured, and multimodal data sources across heterogeneous enterprise environments

- Data labeling, enrichment, and transformation pipelines that produce governed, AI-ready datasets

- Active learning and human-in-the-loop review workflows, allowing iterative improvement of dataset quality without sacrificing governance or compliance controls

- A Data Orchestration Engine Marketplace offering a curated library of pre-built data workflows, more than 200 models, application templates, and NVIDIA NIM microservices

- Native support for NVIDIA AI Blueprints and the NVIDIA AI-Q blueprint, enabling the building of customizable AI agents capable of reasoning over corporate knowledge bases.

- Metadata-layer orchestration that operates across Dell and non-Dell storage systems, a design choice that reduces lock-in risk relative to single-vendor orchestration solutions
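
The active learning and human-in-the-loop workflow listed above can be illustrated with a generic uncertainty-sampling loop. This is a minimal sketch of the technique, not the Data Orchestration Engine's or Dataloop's API: the `Prediction` type, the `route_for_review` function, the threshold, and the review budget are all hypothetical names and values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    item_id: str
    label: str
    confidence: float  # model's probability for its top label, in [0, 1]


def route_for_review(predictions, threshold=0.8, review_budget=2):
    """Split predictions into auto-accepted labels and a human review queue.

    Items below `threshold` are candidates for review. The least confident
    ones go to reviewers first, up to `review_budget`; the rest are deferred
    to a later round.
    """
    accepted = [p for p in predictions if p.confidence >= threshold]
    uncertain = sorted(
        (p for p in predictions if p.confidence < threshold),
        key=lambda p: p.confidence,
    )
    review_queue = uncertain[:review_budget]
    deferred = uncertain[review_budget:]
    return accepted, review_queue, deferred


# Hypothetical batch of model outputs for four images.
batch = [
    Prediction("img-001", "forklift", 0.97),
    Prediction("img-002", "pallet", 0.55),
    Prediction("img-003", "forklift", 0.62),
    Prediction("img-004", "person", 0.31),
]
accepted, review_queue, deferred = route_for_review(batch)
print([p.item_id for p in accepted])      # ['img-001']
print([p.item_id for p in review_queue])  # ['img-004', 'img-002']
print([p.item_id for p in deferred])      # ['img-003']
```

Human-corrected labels from the review queue would feed the next training round, which is what lets dataset quality improve iteratively while every label still passes through an auditable accept-or-review decision.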

GPU-Accelerated Data Processing

Dell integrates NVIDIA RTX PRO Blackwell Server Edition GPUs directly into its data analytics layer, applying GPU parallelism to workloads that have traditionally relied on CPU-based processing. The platform uses NVIDIA’s CUDA-X libraries across three capabilities:

- cuDF (CUDA DataFrame) for structured data processing: Dell claims up to 3x faster SQL query execution than CPU-based systems, based on internal testing with the Qwen3-32B model.

- cuVS (CUDA Vector Search) for vector indexing and search on unstructured data: Dell claims up to 12x faster vector indexing compared to CPU baselines, based on internal testing against Elasticsearch results from December 2025.

- Dell Analytics Engine AI Assistant: a natural-language interface integrated within the analytics layer that lets business users query and visualize managed data without SQL expertise, broadening data access across business units.
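
To make the vector search workload concrete, the sketch below runs an exhaustive cosine-similarity search on the CPU in plain Python. It is not the cuVS API and makes no claim about Dell's implementation; the document names and three-dimensional vectors are hypothetical. It shows the shape of the problem: every query is scored against every stored embedding, and each score is independent of the others, which is why the work maps well onto GPU parallelism and why production systems build approximate nearest-neighbor indexes rather than scanning everything.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, index, k=2):
    """Score the query against every stored vector and return the k best."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings; real ones have hundreds or thousands of dimensions.
index = {
    "policy.pdf": [0.9, 0.1, 0.0],
    "invoice.csv": [0.0, 1.0, 0.0],
    "handbook.docx": [0.8, 0.2, 0.1],
}
results = top_k([1.0, 0.0, 0.0], index)
print([doc_id for doc_id, _ in results])  # ['policy.pdf', 'handbook.docx']
```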

Lightning File System

The Lightning File System is Dell’s new parallel file system designed specifically for AI training and inference environments.

Key characteristics of Lightning:

- Throughput: Dell claims up to 150 GB/s per rack unit for sequential and random read I/O.

- Performance: Dell claims up to 20x better performance than traditional flash-only scale-out file systems and up to 2x higher per-rack-unit throughput than competitors. No third-party benchmarking has been published yet.

- Fabric-based architecture with direct storage access, designed to prevent I/O bottlenecks that reduce GPU utilization during training runs

- NVIDIA integration: native connectivity with NVIDIA ConnectX and BlueField infrastructure within the Dell AI Factory ecosystem
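
A quick back-of-the-envelope calculation shows what the claimed 150 GB/s per rack unit would mean in practice. The sketch assumes throughput scales linearly with rack units and uses a hypothetical `efficiency` factor to discount the vendor's peak figure; the dataset size and rack-unit count are illustrative, and none of these numbers are measured results.

```python
def read_time_seconds(dataset_tb, rack_units, gb_per_s_per_ru=150.0, efficiency=1.0):
    """Best-case time to stream a dataset once at the claimed peak rate.

    Assumes linear scaling across rack units, which real deployments
    may not achieve.
    """
    dataset_gb = dataset_tb * 1000  # decimal units: 1 TB = 1000 GB
    aggregate_gb_per_s = rack_units * gb_per_s_per_ru * efficiency
    return dataset_gb / aggregate_gb_per_s


# 90 TB over 4 rack units at the full claimed rate: 90,000 GB / 600 GB/s.
print(read_time_seconds(dataset_tb=90, rack_units=4))                  # 150.0
# The same read if only half the claimed rate is sustained.
print(read_time_seconds(dataset_tb=90, rack_units=4, efficiency=0.5))  # 300.0
```

Because no third-party benchmarks exist yet, the `efficiency` parameter is the number buyers will want to pin down in a proof of concept.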

Exascale Storage

Exascale Storage is a software-defined, three-in-one storage architecture. It merges three storage types on a single Dell PowerEdge server platform.

- Dell PowerScale (file storage), Dell ObjectScale (object storage), and Dell Lightning File System (parallel file storage) run concurrently on shared hardware, allowing customers to choose storage types based on workload needs without having to buy separate infrastructure.

- NVIDIA SuperNIC connectivity: support for NVIDIA CX-8 and CX-9 SuperNICs with planned connectivity of up to 800 GbE

- NVIDIA CMX context memory storage and KV cache offloading: enables offloading LLM context from GPU VRAM to high-speed shared storage, extending effective context length for long-context and agentic AI workloads without increasing GPU memory requirements.

- Availability: targeted for early 2H 2026
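
The case for KV cache offloading follows from simple arithmetic. A transformer stores one key and one value vector per layer, per KV head, per token, so the cache grows linearly with context length. The sketch below applies that standard formula to a hypothetical model configuration (64 layers, 8 KV heads, head dimension 128, 16-bit values); these parameters are assumptions for illustration, not the specification of any model named in the announcement.

```python
def kv_cache_bytes(tokens, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Size of the KV cache for one sequence.

    The factor of 2 accounts for storing both keys and values.
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return tokens * per_token


GIB = 1024 ** 3

# Hypothetical model configuration with a 128K-token (131,072) context.
size = kv_cache_bytes(tokens=131_072, num_layers=64, num_kv_heads=8, head_dim=128)
print(size / GIB)  # 32.0
```

Under these assumptions, a single 128K-token session needs 32 GiB for its KV cache alone, before model weights. A few dozen concurrent long-context sessions would exceed the memory of any single GPU, which is the pressure that offloading to shared storage is meant to relieve.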

Analysis

For data engineers and AI infrastructure teams, the most immediate practical effect of this announcement is the Data Orchestration Engine. Companies that have spent time building manual data labeling, enrichment, and pipeline workflows now have a vendor-provided alternative.

Its no-code and low-code interfaces reduce the skill barrier for preparing data for AI, tackling a real bottleneck in organizations where data engineering capacity lags behind AI project ambitions.

Several practical considerations apply:

- The metadata-layer orchestration model allows the engine to work across existing storage investments, decreasing the need to standardize on Dell storage to leverage the orchestration capabilities.

- GPU-accelerated SQL and vector search capabilities require NVIDIA RTX PRO Blackwell Server Edition hardware. Organizations without current-generation NVIDIA server GPUs need to make extra capital investments before experiencing the claimed performance improvements.

- Offloading KV cache to Exascale Storage addresses a key challenge in deploying long-context LLMs at scale. GPU VRAM is limited and costly; transferring KV cache to fast shared storage extends model capacity without expanding GPU hardware. This is an important capability for agentic AI deployments.

Market Position

Dell’s big move in this announcement is to redefine its market identity. Traditionally known as a hardware infrastructure vendor with an extensive product lineup, Dell now positions itself as an end-to-end enterprise AI platform provider.

The announcement of the AI Data Platform is the clearest expression of that reframing so far, combining orchestration software, GPU-accelerated analytics, and storage innovations into a single platform narrative.

Several aspects deserve highlighting:

- Dell’s breadth advantage is genuine: few vendors can credibly offer servers, storage, networking, professional services, and now data orchestration software under a single sales motion. This matters to large enterprise buyers seeking to minimize integration complexity across the AI pipeline.

- The on-premises positioning is intentional and well-timed: Dell highlights a multi-year trend showing that CIOs prefer to develop AI capabilities internally and on-premises, driven by data sovereignty, security needs, and TCO considerations.

- Risk of over-reliance on NVIDIA: The platform is built around NVIDIA’s compute, networking, and software at every level. Customers adopting the full stack become dependent on NVIDIA’s pricing and availability. This is a key concern, especially given recent GPU supply issues and increasingly aggressive competition in enterprise inference. NVIDIA is dominant now, but that could change (though Dell’s use of “…with NVIDIA” as part of the solution brand suggests the company is already thinking ahead).

Competitive Landscape

NVIDIA GTC 2026 served as a staging ground for almost every major enterprise infrastructure vendor, and Dell’s AI Data Platform announcement did not happen in isolation. The competition in the storage and AI infrastructure sectors is intensifying, with the differentiation Dell created through its early AI Factory program facing increasing pressure from multiple fronts.

Dell’s biggest competitive risk lies in the software and orchestration layer. A hardware-focused vendor promoting a four-month-old acquisition as a platform orchestration engine will likely face skepticism from buyers who have already adopted Databricks, Snowflake, or cloud-native orchestration tools.

Dell must show that the Dataloop technology integrates seamlessly with existing enterprise data systems rather than necessitating the replacement of current platforms…

Full competitive analysis is available to NAND Research clients and IT advisory members.

Final Thoughts

The Dell AI Data Platform with NVIDIA is a significant strategic shift for Dell. Combining a data orchestration engine, GPU-accelerated analytics, Lightning File System, and Exascale Storage into a unified platform narrative gives Dell a strong case for a position above the hardware layer in the AI infrastructure stack.

The 4,000-customer installed base for the Dell AI Factory demonstrates real commercial validation, and the KV cache offloading capability via Exascale Storage offers a technically distinct solution to an emerging constraint in deploying production agentic AI.

For enterprise technology buyers, the most important signal in this announcement is not any single product capability but what it implies about where the AI infrastructure market is heading. The data layer is becoming the primary battleground for enterprise AI value, and vendors that control both the storage performance and the orchestration intelligence above it will have significant leverage over AI project outcomes.

For hyperscalers and neocloud buyers, Lightning gives Dell a scalable parallel file system, the capability this segment requires and one previously supplied mainly by smaller rivals such as WEKA and VAST Data. It is also here that Dell gains a competitive advantage over more traditional enterprise storage competitors that lack a scalable parallel file system.

Overall, Dell’s vertically integrated approach provides it with a structural advantage among large enterprises that prefer consolidating vendors. Organizations evaluating on-premises AI infrastructure this year should consider the Dell AI Data Platform for their shortlist, with a clear view of which components are available today and which are still upcoming, such as Exascale Storage, targeted for early 2H 2026.