At NVIDIA GTC 2026, HPE announced a broad expansion of its portfolio, including AI factory infrastructure, supercomputing platforms, and enterprise storage. These announcements collectively strengthen HPE’s role as a comprehensive NVIDIA-aligned infrastructure provider, extending the NVIDIA AI Computing by HPE portfolio with hardware based on the Vera Rubin and Blackwell GPU architectures, new CPU compute options, updated networking, and various software and services integrations.

HPE announced that its Alletra Storage MP X10000 has achieved NVIDIA-Certified Storage Foundation-level validation for object-based systems, making it the first object storage platform to earn this designation.

Separately, HPE launched next-generation rack-scale AI systems, a new high-density GPU server, its first NVIDIA Vera CPU compute blade for HPE Cray supercomputers, and expanded software integrations, including NVIDIA Mission Control, Red Hat AI Enterprise, and multi-tenancy support through NVIDIA Multi-Instance GPU.

Announcement Details

HPE’s GTC 2026 announcements cover four main areas: supercomputing platform updates, AI factory systems, storage certification, and software integrations. The entire portfolio aims at environments ranging from enterprise AI deployments to exascale supercomputing.

Supercomputing Platform: HPE Cray Supercomputing GX5000

HPE introduces two major features to its second-generation exascale HPE Cray Supercomputing GX5000 platform, built to unify AI and HPC workloads:

- HPE Cray Supercomputing GX240 Compute Blade: HPE describes this as one of the first compute blades built around NVIDIA’s Vera CPU. Each blade supports up to 16 NVIDIA Vera CPUs in a liquid-cooled form factor. HPE claims industry-leading density on the Vera platform, with configurations scaling to 40 blades per rack, delivering 640 Vera CPUs and 56,320 NVIDIA Olympus Arm-compatible cores. Availability is expected sometime in 2027.

- NVIDIA Quantum-X800 InfiniBand: HPE adds Quantum-X800 as a networking option on the GX5000. The switches provide 144 ports of 800 Gb/s connectivity, with power-efficiency features including low-power link state and power profiling. Availability on the GX5000 platform is also expected in 2027.

AI Factory Systems

HPE expanded its HPE AI Factory portfolio with two new systems aimed at large-scale service provider and AI deployments, along with an extension of Blackwell GPU availability:

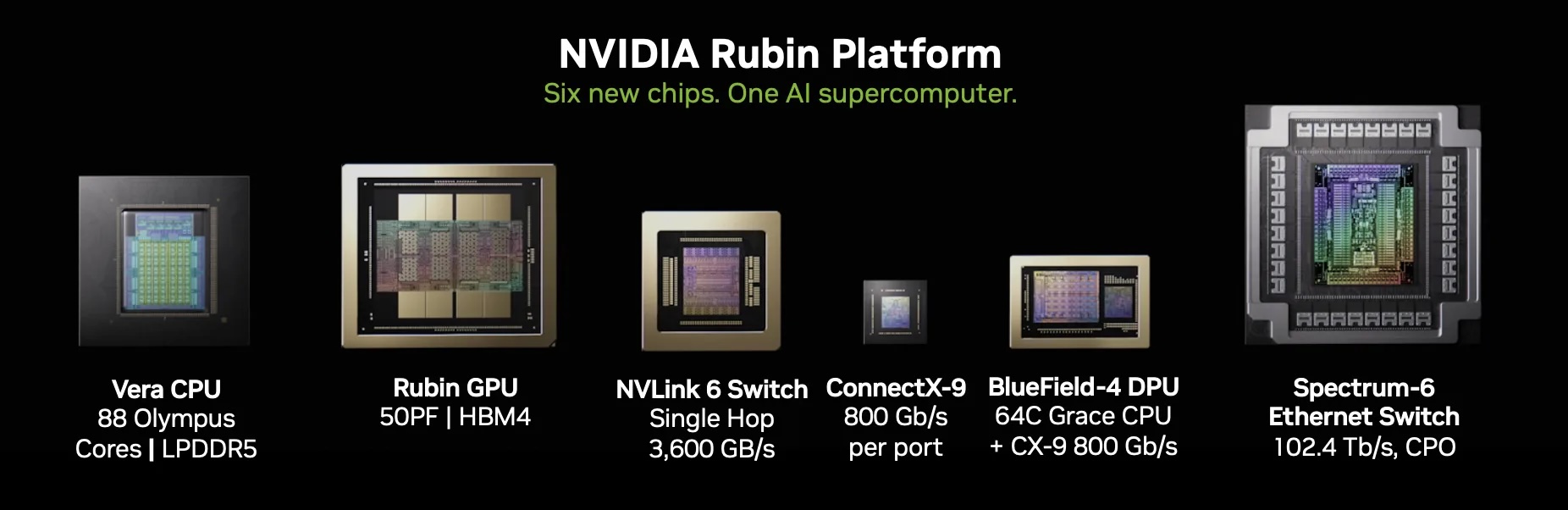

- NVIDIA Vera Rubin NVL72 by HPE: A rack-scale system designed for frontier-scale AI models exceeding one trillion parameters. The system integrates 36 NVIDIA Vera CPUs and 72 NVIDIA Rubin GPUs, sixth-generation NVIDIA NVLink scale-up networking, NVIDIA ConnectX-9 SuperNIC, and NVIDIA BlueField-4 DPUs. HPE incorporates its liquid–cooling integration and data center design services into its offering. This system targets neo-cloud service providers. Availability is expected in December 2026.

- HPE Compute XD700: A new AI server inspired by the Open Compute Project, built on NVIDIA HGX Rubin NVL8, designed for AI model training and inference workloads. Each rack configuration can support up to 128 Rubin GPUs. HPE says the new server provides double the GPU density per rack compared to the previous-generation HPE ProLiant Compute XD685. Availability is expected in early 2027.

- NVIDIA RTX PRO 6000 Blackwell Server Edition: HPE makes these Blackwell-architecture GPUs available across the full HPE AI Factory portfolio. This option is available today.

HPE Alletra Storage MP X10000: NVIDIA-Certified Storage

HPE Alletra Storage MP X10000 has earned NVIDIA-Certified Storage validation for object-based systems at the Foundation level. The Foundation level assesses storage platforms for AI workloads that scale up to 128 GPUs, including benchmarking and functional testing for enterprise-grade availability and reliability.

The X10000 is a scale-out object storage platform. Key characteristics relevant to the certification include:

- Scale-out architecture with independent scaling of performance and capacity

- Integrated data intelligence capabilities for AI data pipeline preparation and enrichment

- S3-compatible object storage designed for large unstructured data workloads such as AI training datasets and model checkpoints

- Designed to feed data to NVIDIA accelerated computing environments with throughput sufficient to maintain GPU utilization at scale

The certification confirms the platform can support model training, lower-latency inference, and improved GPU utilization.

Software and Services Integrations

Several software integrations round out the GTC 2026 portfolio expansion and are relevant to enterprise buyers evaluating full-stack deployment options:

- NVIDIA Mission Control: HPE AI Factory at-scale and sovereign configurations will support NVIDIA Mission Control software for workload orchestration, monitoring, and autonomous recovery. This includes NVIDIA Run:ai for workload management and NVIDIA Dynamo for inference optimization. General availability is planned for later in 2026.

- Red Hat Integration: HPE AI Factory now supports Red Hat Enterprise Linux and Red Hat OpenShift as part of the Red Hat AI Enterprise stack, integrating with NVIDIA AI Enterprise. This option is available today.

- Multi-Tenancy via NVIDIA MIG: HPE adds multi-tenancy support to the AI Factory portfolio through NVIDIA Multi-Instance GPU with GPU passthrough, enabled by SUSE Virtualization and SUSE Rancher Prime. The integration supports both hard and soft tenancy models for Kubernetes and VM-based workloads. Availability is targeted for Spring 2026.

Analysis

HPE is executing a consistent strategy, positioning itself as the preferred full-stack NVIDIA partner for enterprise and sovereign AI deployments, with differentiation built on its supercomputing heritage, liquid-cooling expertise, and validated integration across NVIDIA’s hardware and software stack.

The GTC 2026 announcements reinforce this positioning across both the infrastructure hardware and software orchestration layers.

Several items worth noting:

- The NVIDIA Cloud Partner endorsement creates a commercial pathway for service providers to use HPE AI Factory as the foundation for NVIDIA-validated cloud AI services. This is a meaningful differentiator in the neo-cloud and sovereign cloud markets where third-party validation matters to end customers.

- The Alletra Storage MP X10000 certification extends HPE’s AI infrastructure story from compute and networking into storage, completing a more coherent full-stack narrative. This matters because storage has historically been the weak link in vendor AI factory pitches that emphasize GPUs while treating storage as an afterthought.

- The breadth of the announcement carries a risk: HPE is making commitments across a wide product surface with significant portions not available until 2027.

- The supercomputing credentials (HPE claims three of the world’s top three exascale systems as of November 2025, validated by the TOP500 list) give HPE credibility in the sovereign AI and national laboratory markets that few competitors can match. This heritage translates directly into customer confidence for large-scale procurement decisions.

Competitive Landscape

HPE competes for AI factory and supercomputing infrastructure business against Dell Technologies, Lenovo, and Supermicro in the server and rack-scale systems market, and against Pure Storage, NetApp, VAST Data, and WEKA in the AI storage layer. The competitive picture differs meaningfully by segment.

This section is only available to NAND Research Clients and IT Advisory Members

Final Thoughts

HPE’s GTC 2026 announcements show a vendor that has made a deliberate, multi-year commitment to the NVIDIA ecosystem and is now executing across the full AI infrastructure stack.

The portfolio updates span compute blades, rack-scale GPU systems, high-density AI servers, certified storage, and software orchestration. The depth of integration with NVIDIA’s hardware platforms and software stack is genuine, not superficial, with the Vera Rubin NVL72 and GX240 compute blade co-engineered products, not third-party servers with NVIDIA GPUs added. The Mission Control and Red Hat integrations address the orchestration and operating system layers that enterprise buyers require for production AI deployments.

The Alletra Storage MP X10000 certification carries practical near-term value. Storage qualification is a real friction point in enterprise AI deployment, and a certified object storage platform from a major infrastructure vendor simplifies the procurement and integration process for organizations that have already standardized on HPE.

Overall, this presents a compelling set of updates that ensure HPE remains a key player in AI enterprise infrastructure offerings. Broaden the perspective to include HPE’s AI infrastructure products alongside the company’s strong post-Juniper-acquisition networking offerings, all unified under its GreenLake philosophy, and you’ll see a company set to deliver significant value to enterprise IT.