The focus at NVIDIA GTC 2026 was on developing a unified infrastructure model that vendors are now adopting. The industry has effectively reached a stage where NVIDIA sets the architectural standard, while partners compete on how well they implement it in enterprise and service-provider environments.

Across HPE, Dell, Lenovo, Cisco, IBM, NetApp, Everpure (Pure Storage), WEKA, VAST Data, and MinIO, the announcements follow three consistent patterns:

- Compute and networking are converging toward NVIDIA-designed architectures, especially Blackwell-based systems, MGX modular platforms, Spectrum-X Ethernet, and BlueField DPUs. There is little variation at this level.

- Data infrastructure is becoming crucial for AI performance, especially as workloads shift toward inference, RAG, and agentic AI. Vendors are now explicitly focusing on optimizing token throughput, GPU utilization, and data pipeline latency.

- Operational layers (security, governance, orchestration) are emerging as primary points of differentiation, with Cisco and IBM taking the most explicit positions in these areas.

Key Market Implications

| Theme | Implication |
| --- | --- |
| Hardware differentiation is narrowing | OEMs compete less on architecture, more on execution and support |
| Data path efficiency is critical | Storage and data vendors become central to AI performance |
| Operational maturity is the deciding factor | Security, governance, and orchestration define vendor selection |

For buyers, the decision comes down to which vendor can best run AI infrastructure at scale in their environment.

Foundational NVIDIA Technologies

Before delving into what each core infrastructure vendor announced, let’s focus on what NVIDIA introduced that influenced all partner statements:

- Blackwell and Rubin platform evolution, providing rack-scale GPU systems with NVLink domain scaling.

- Spectrum-X Ethernet fabric as the default high-bandwidth networking model.

- BlueField-4 DPUs serving as control and data-path offload layers.

Two new architectural constructs are particularly significant:

- STX (Scalable Token eXchange): introduces a storage and memory tier specifically designed for inference workloads.

- CMX (Context Memory): a persistent layer that stores KV cache and agent state across inference sessions.

The key technical shift is that storage becomes part of the inference process itself, rather than being used only during training or data preparation. As inference workloads grow and agentic AI systems need persistent context, the line between memory and storage is disappearing.
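To make the idea of a persistent context tier concrete, here is a minimal sketch of a two-tier cache: a small in-memory tier that spills the least recently used session context to disk and restores it on a later request. It is an illustration of the general pattern only, not NVIDIA's STX or CMX interface. The class name `TieredContextCache`, its methods, and the JSON-on-disk layout are all assumptions made for this example.

```python
import json
import tempfile
from collections import OrderedDict
from pathlib import Path


class TieredContextCache:
    """Toy two-tier context store (hypothetical, for illustration only).

    Fast tier: a bounded in-memory LRU map.
    Persistent tier: one JSON file per session in `store_dir`.
    """

    def __init__(self, store_dir, fast_capacity=2):
        self.store_dir = Path(store_dir)
        self.store_dir.mkdir(parents=True, exist_ok=True)
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # session_id -> context, oldest first

    def _path(self, session_id):
        return self.store_dir / f"{session_id}.json"

    def put(self, session_id, context):
        """Store a context in the fast tier, spilling old entries to disk."""
        self.fast[session_id] = context
        self.fast.move_to_end(session_id)
        while len(self.fast) > self.fast_capacity:
            old_id, old_context = self.fast.popitem(last=False)
            self._path(old_id).write_text(json.dumps(old_context))

    def get(self, session_id):
        """Return (context, tier), where tier is 'fast', 'persistent', or 'miss'."""
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return self.fast[session_id], "fast"
        path = self._path(session_id)
        if path.exists():
            context = json.loads(path.read_text())
            self.put(session_id, context)  # promote back into the fast tier
            return context, "persistent"
        return None, "miss"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as store_dir:
        cache = TieredContextCache(store_dir, fast_capacity=2)
        for session in ("a", "b", "c"):
            cache.put(session, {"session": session})
        # Session "a" was evicted from memory, so it is served from disk.
        print(cache.get("a"))
```

In this sketch a context is written to the persistent tier only when it is evicted, so anything still in memory is lost if the process stops. A production design would also need write-back or write-through policies, which is part of what makes this tier an infrastructure problem rather than an application detail.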

Vendor Announcements

This section walks through what was actually announced, vendor by vendor, with an emphasis on architecture, capabilities, and practical implications.

Dell Technologies

Dell delivered one of the most complete infrastructure updates at GTC, expanding its Dell AI Factory with NVIDIA into a more vertically integrated stack.

System-Level Announcements

Dell announced new PowerEdge XE systems aligned with Blackwell and Rubin, featuring liquid-cooled options for high-density deployments. Rack-scale setups support over 100 GPUs per rack and integrate with NVIDIA NVLink/NVSwitch networks.

Storage and Data Layer

Dell made two notable moves in storage:

- The “Lightning” parallel file system, a tier-0 storage layer explicitly designed for ultra-low-latency data access during training and inference.

- Expanded Dell ObjectScale and PowerScale integration to create a multi-tier architecture: object storage for data lakes and ingestion, file storage for training and high-throughput workloads, and parallel file for latency-sensitive AI pipelines.
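The tier mapping described above can be sketched as a simple placement rule. This is a hypothetical illustration, not Dell's placement logic or any Dell API. The names `Tier`, `Workload`, and `choose_tier` are invented for the example, and sending non-latency-sensitive inference to the file tier is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    OBJECT = "object storage"
    FILE = "file storage"
    PARALLEL_FILE = "parallel file system"


@dataclass(frozen=True)
class Workload:
    name: str
    stage: str  # "ingest", "train", or "inference" (hypothetical labels)
    latency_sensitive: bool = False


def choose_tier(workload: Workload) -> Tier:
    """Pick a storage tier using the three-way split described in the text."""
    if workload.latency_sensitive:
        # Latency-sensitive AI pipelines go to the parallel file tier.
        return Tier.PARALLEL_FILE
    if workload.stage == "ingest":
        # Data lakes and ingestion land on object storage.
        return Tier.OBJECT
    # Training and other high-throughput work uses file storage (assumed default).
    return Tier.FILE


if __name__ == "__main__":
    for w in (
        Workload("raw-data-lake", "ingest"),
        Workload("model-training", "train"),
        Workload("kv-cache-serving", "inference", latency_sensitive=True),
    ):
        print(f"{w.name}: {choose_tier(w).value}")
```

Real deployments would weigh more than two attributes (capacity, cost, data locality), which is one reason the assessment below highlights operational complexity.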

Data Orchestration Engine

Dell also introduced a Data Orchestration Engine, built from its Dataloop acquisition, that continuously prepares and labels data, feeds AI pipelines in real time, and reduces manual data engineering overhead.

Assessment: Dell is developing one of the most comprehensive “AI factory” stacks in the market. The challenge lies in operational complexity. Customers still need to integrate multiple storage tiers, orchestration layers, and AI frameworks into a unified system.

HPE

HPE concentrated on extending its Private Cloud AI platform and tightening integration with NVIDIA’s reference architectures.

HPE announced expansion of Private Cloud AI to support NVIDIA Blackwell GPUs, including RTX PRO variants for edge and enterprise inference. Scaling configurations support up to approximately 128 GPUs per deployment unit, with integrated networking and storage.

HPE also announced validation of Alletra MP X10000 object storage as NVIDIA-certified storage.

The company emphasized integration with the GreenLake consumption model, support for sovereign AI deployments, and unified management through Compute Ops Management.

HPE is not adding new infrastructure primitives but rather integrating NVIDIA architectures into enterprise-ready systems and enhancing lifecycle management and flexibility in usage. This is well-aligned with its broader GreenLake strategy.

Assessment: HPE’s strength is in operationalization and enterprise alignment. Its differentiation relies less on raw performance and more on deployment model, governance, and support.

Lenovo

Lenovo built its GTC presence around production inference economics and global scalability.

- Introduced new systems based on Blackwell and Rubin architectures, including support for NVL72-scale configurations.

- Expanded Hybrid AI Advantage with NVIDIA, combining on-prem and cloud deployments.

- Introduced smaller-scale inference platforms using RTX PRO GPUs.

Lenovo also emphasized deployment of AI cloud “gigafactories” and strong positioning in sovereign AI markets.

Assessment: Lenovo is competing on cost efficiency and scalability, especially outside North America. It is less distinct at the architecture level but is becoming more relevant in large-scale deployments.

Everpure (Pure Storage)

Everpure's announcements focused on performance validation, pipeline automation, and consumption models:

- FlashBlade//EXA Positioning for AI: High-throughput file system optimized for GPU workloads. Vendor claims include high GPU utilization and strong benchmark results, including SPEC AI benchmarks.

- Evergreen//One for AI: A consumption model tied to GPU usage and AI workloads, not just storage capacity. Intended to align infrastructure cost with AI output.

- Data Stream (New Offering): A pipeline automation layer that handles data ingestion, transformation, and vectorization. Built with NVIDIA GPU acceleration.

Everpure also aligned with NVIDIA STX, supporting NVIDIA’s architecture for context-aware inference workloads.

Assessment: Everpure is shifting from a performance-focused storage vendor to a data pipeline infrastructure provider. The strategic opportunity is significant.

NetApp

NetApp’s primary announcement was AI Data Engine (AIDE), which bolsters its metadata capabilities for AI infrastructure.

AIDE introduces a global metadata catalog that continuously analyzes and enriches data, semantic indexing of unstructured datasets, and integration with existing ONTAP and StorageGRID deployments.

NetApp also aligned with NVIDIA STX architecture and Cisco AI POD / FlexPod AI.

Unlike many competitors, NetApp is not focusing on raw throughput or GPU feeding speed. Instead, it is addressing data discoverability, governance, and lifecycle management.

Assessment: NetApp’s approach is distinct, focusing on data preparation and governance issues, which still slow down enterprise AI. It does this while remaining focused on multi-tiered enterprise storage.

WEKA

WEKA made one of the more technically significant announcements, extending its platform into memory-tier infrastructure:

- NeuralMesh AI Data Platform: Now generally available, with appliance-style deployment aligned with NVIDIA AI Data Platform. Designed to accelerate time-to-production.

- Augmented Memory Grid with NVIDIA STX: The integration extends GPU memory using distributed storage and enables persistent KV cache and context memory.

WEKA claims up to 6.5x increase in token throughput and significant improvements in read/write bandwidth for inference workloads.

Assessment: WEKA is expanding from storage into AI memory infrastructure, aligning with the trend toward inference-intensive workloads.

VAST Data

VAST focused on making AI pipelines deployable, rather than introducing new infrastructure components:

- Foundation Stacks: an open-source implementation of NVIDIA AI Blueprints, with pre-integrated pipelines running on VAST AI OS. These stacks include data ingestion, processing, vectorization, as well as query and inference workflows.

VAST also highlighted integration with NVIDIA AI Data Platform and collaboration with partners like Cisco and Supermicro.

Assessment: VAST is establishing itself as a data platform layer rather than a storage vendor. Its competitive edge focuses on simplifying pipelines instead of boosting raw throughput.

MinIO

MinIO made a compelling move, integrating object storage directly into the inference path:

- AIStor Support for NVIDIA STX Architecture: Key features include running object storage services directly on BlueField DPUs, support for GPUDirect RDMA with S3-compatible storage (tech preview), eliminating host CPU involvement in the data path, and high-throughput object storage integrated into inference pipelines.

MinIO positions this as a scalable backend for NVIDIA CMX and a unified object layer for training, inference, and RAG.

Assessment: MinIO is redefining object storage as a performance layer, not just capacity. This is strategically important but requires significant changes in how enterprises deploy object storage.

Cisco

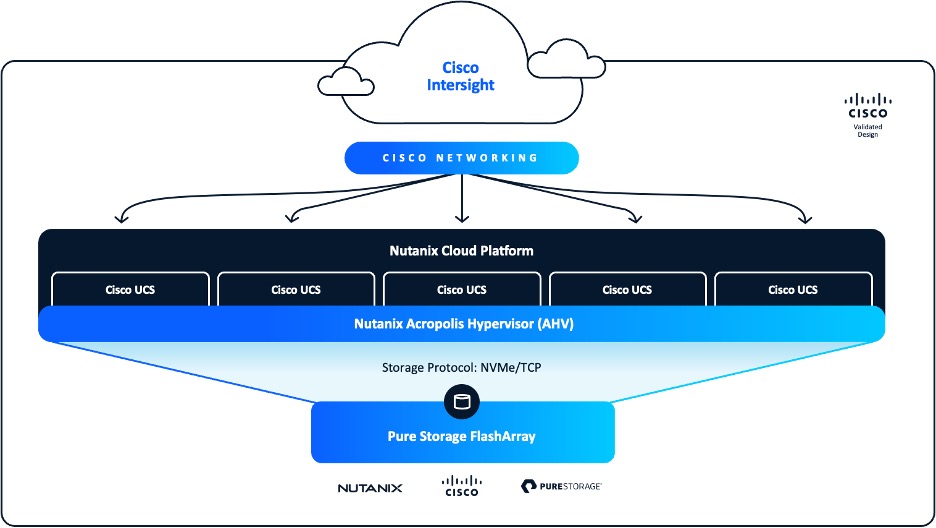

Cisco expanded its Secure AI Factory with NVIDIA, making security a core architectural layer:

- Integration of security controls across the AI stack including model-level protection, runtime security, and network segmentation.

- Extension to edge deployments, new Spectrum-based switching platforms, and integration with Splunk for observability.

- Policy enforcement at the DPU level (BlueField) and controls for agentic AI systems.

Assessment: Cisco isn’t competing in compute. Instead, it is establishing a unique role as the control plane for AI infrastructure, focusing on security, observability, and policy enforcement as its main value propositions.

IBM

IBM focused on enterprise integration and data acceleration:

- watsonx.data integration with NVIDIA GPUs, demonstrating improvements in query performance and cost efficiency.

- Blackwell GPUs now on IBM Cloud.

- Storage Scale expanded for AI workloads.

Assessment: IBM operates above the infrastructure layer, concentrating on data and workflow orchestration. Its strength lies in regulated environments rather than raw infrastructure performance, making it a compelling enterprise play.

Impact Analysis

Practitioners: Operational Benefits and Costs

From a practitioner perspective, these announcements reduce uncertainty but do not eliminate complexity.

The primary benefit is standardization. Organizations can now deploy AI infrastructure using well-defined patterns rather than designing systems from scratch. This reduces design risk, integration time, and deployment variability.

However, costs and challenges remain substantial:

- Infrastructure costs continue to rise: GPU pricing, power and cooling requirements, and networking upgrades all add to the total.

- Operational complexity rises: multi-layered architectures, integration across compute, storage, and security, and the need for specialized expertise all add to operational challenges.

The introduction of new concepts such as context memory, STX architectures, and GPU-direct storage adds extra layers of complexity that IT teams will need to understand and evaluate.

Conclusion

GTC 2026 showed the industry shifting towards the realities of bringing AI infrastructure into the enterprise, with NVIDIA and its ecosystem partners building a more structured and predictable AI infrastructure. Vendors have aligned around NVIDIA-defined architectures, reducing fragmentation and accelerating deployment timelines.

At the same time, the locus of innovation has shifted. NVIDIA has compressed and standardized much of what infrastructure providers traditionally used to differentiate themselves. This brings stability to enterprise AI practitioners (and widens NVIDIA’s competitive moat), but it also forces storage and server providers to adapt to a new market reality.

Competitive differentiation now depends on how well an AI factory can efficiently process data, keep GPU utilization high, ensure operational control and security, and address real-world enterprise constraints.

For buyers, this results in a more accessible yet more detailed decision-making process. Infrastructure selection is no longer just about assembling components. It’s about choosing a platform that fits operational needs, data strategy, and long-term growth.

Key Takeaway: The industry has moved from experimentation to repeatable infrastructure patterns, enabling organizations to deploy AI systems with greater confidence and speed. Those organizations that understand the interplay between compute, data, and operations will be best positioned to translate these infrastructure advances into sustained business value.