Research Note: Qualcomm Introduces AI200 & AI250 for Data Center Inference

Qualcomm Technologies recently announced two data center inference accelerators, the AI200 and AI250, targeting commercial availability in 2026 and 2027, respectively. The products mark Qualcomm’s first strategic push into rack-scale AI inference.

NAND Insider Newsletter: Week of May 12, 2025

Each week, NAND Research publishes a newsletter for our industry customers that looks at what’s driving the week and what caught our attention last week. Below is an excerpt from this week’s edition, May 10, 2025.

NAND Insider Newsletter: April 21, 2025

Each week, NAND Research publishes a newsletter for our industry customers that looks at what’s driving the week and what caught our attention last week. Below is an excerpt from this week’s edition, April 21, 2025.

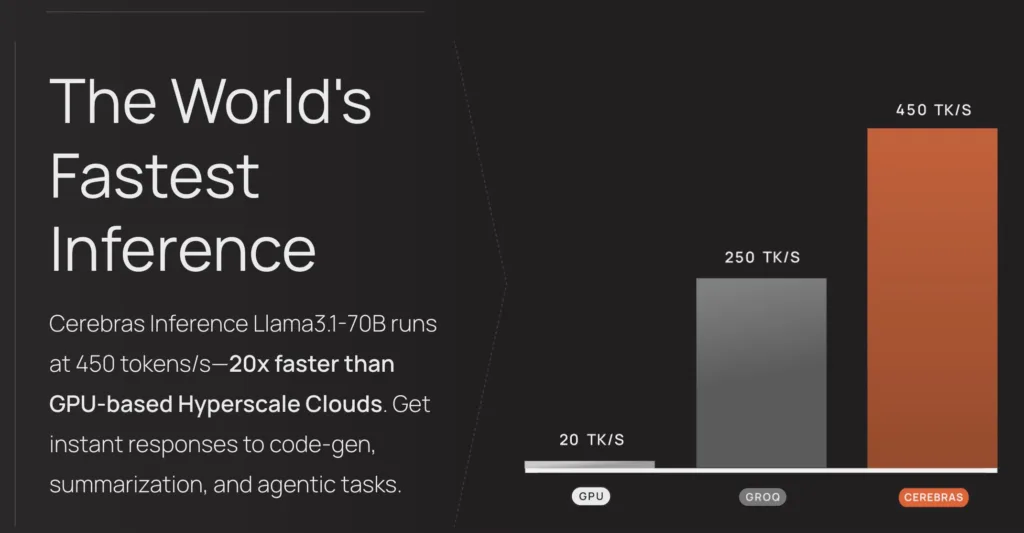

Research Note: Cerebras Inference Service

Cerebras Systems recently introduced Cerebras Inference, an AI inference service that the company positions on speed and affordability. The new service achieves 1,800 tokens per second on Meta’s Llama 3.1 8B model and 450 tokens per second on the 70B model, which Cerebras says makes it 20 times faster than NVIDIA GPU-based alternatives.