Research Note: Improving Inference with NVIDIA’s ‘CMX’ Inference Context Memory Storage Platform

At NVIDIA Live at CES 2026, NVIDIA introduced its Inference Context Memory Storage (ICMS) platform as part of its Rubin AI infrastructure architecture. NVIDIA’s ICMS addresses KV cache scaling challenges in LLM inference workloads.

The technology targets a specific gap in existing memory hierarchies: GPU high-bandwidth memory is too limited for growing context requirements, while general-purpose network storage introduces latency and power consumption penalties that degrade inference efficiency.

SC25: Beyond Super Computing

Supercomputing 2025 delivered a clear message to enterprise IT leaders: the infrastructure conversation has fundamentally changed. The announcements from SC25 were about architectural transformation.

From rack-scale designs to quantum integration to facility-level engineering, the building blocks of large-scale AI and HPC systems are being reimagined.

Research Note: VDURA Data Platform v12

VDURA recently announced Version 12 of its VDURA Data Platform (VDP), formerly known as PanFS, introducing three primary architectural enhancements to its parallel file system: an elastic Metadata Engine for distributed metadata processing, system-wide snapshot capabilities, and native support for SMR disk drives.

NAND Insider Newsletter: March 24, 2025

Every week, NAND Research puts out a newsletter for our industry customers that looks at what’s driving the week and what caught our attention last week. Below is an excerpt from this week’s edition, dated March 24, 2025.

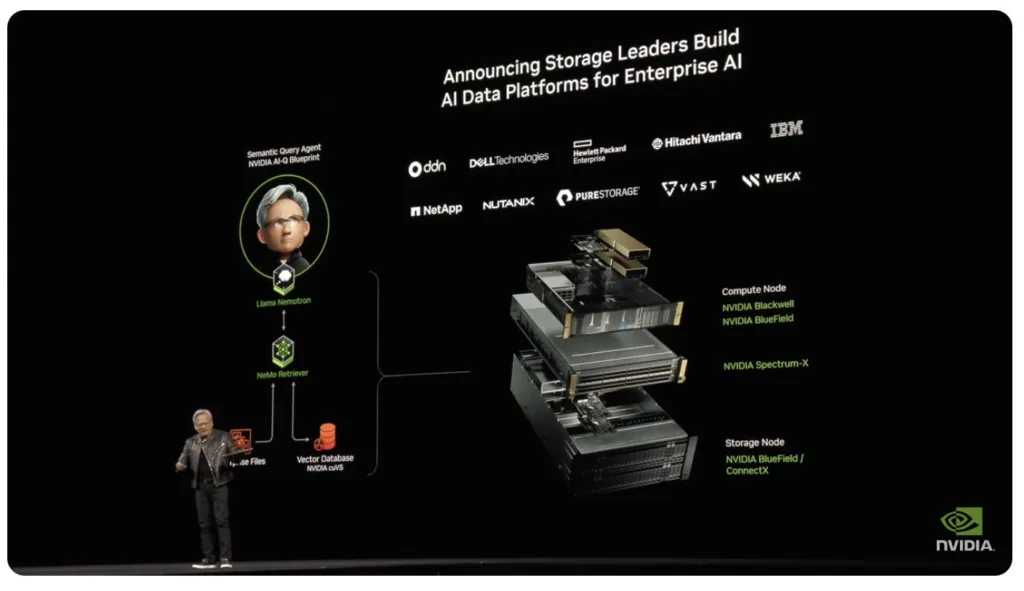

Research Note: NVIDIA AI Storage Certifications & AI Data Platform

At its annual GTC event in San Jose, NVIDIA announced an expansion of its NVIDIA-Certified Systems program, alongside the new NVIDIA AI Data Platform reference design, adding enterprise storage certification to help streamline AI factory deployments.

NAND Insider Newsletter: January 12, 2025

Every week, NAND Research puts out a newsletter for our industry customers. Below is an excerpt from this week’s edition.

SC24: Shaping the Future of IT with High-Performance Computing and AI

Last week’s Supercomputing 2024 (SC24) conference in Atlanta brought together IT leaders, researchers, and industry innovators to unveil advancements in HPC and AI, with even a little quantum computing thrown in.