Every quarter I participate in a call for buy-side investment analysts focused on the broader datacenter/hyperscaler semiconductor ecosystem

Here, I’m sharing the raw notes I used to drive the Q1 2026 call (it’s my cheat sheet).

(If you’d like more information about how to participate, feel free to email us at [email protected].)

Q1 Key Announcements (all vendors)

| Company | Deal | Party | Value / Scale | Details |

| AMD | AI chip supply agreement | Meta | ~$60B–$100B potential | Multi-year deal to deploy 6 GW of AMD Instinct GPUs across Meta AI infrastructure. Includes a custom MI450 GPU and Helios rack architecture with EPYC CPUs. |

| AMD | Strategic chip supply + equity option | Meta | Up to 10% AMD equity via warrants | Meta can receive up to 160M AMD shares tied to GPU shipment milestones. |

| AMD | AI chip supply agreement | OpenAI | Multi-year (value undisclosed) | OpenAI agreed to purchase 6 GW of AMD AI processors over several years, with a similar warrant structure. |

| Broadcom | Custom AI accelerator design program | Anthropic | Multi-gigawatt deployments | Broadcom expects to supply 1 GW of custom chips in 2026 scaling to 3 GW by 2027. |

| Broadcom | Custom AI chip design partnerships | Alphabet, Microsoft, Amazon, Meta | Multi-year programs | Broadcom designs custom AI processors for multiple hyperscalers. |

| Broadcom | AI chip development partnership | OpenAI | Production expected ~2027 | OpenAI is working with Broadcom on its first in-house AI accelerator. |

| NVIDIA | Strategic ecosystem investment | Lumentum & Coherent | ~$4B total investment | NVIDIA investing $2B each in optical networking companies to accelerate photonic interconnects for AI chips. |

| NVIDIA | Large AI chip supply deal | OpenAI | Part of broader ~$100B AI investment ecosystem | NVIDIA expected to supply AI processors and potentially invest in OpenAI infrastructure. |

| Marvell | Custom ASIC and networking supply | Amazon, Microsoft (reported by analysts) | Multi-year infrastructure demand | Marvell supplies custom chips and networking silicon used with Trainium and Maia AI systems. |

| Qualcomm | Data-center AI accelerator push | Cloud providers / AI infrastructure vendors | Product launch cycle 2026 | Qualcomm introduced AI200 / AI250 inference accelerators targeting data-center AI workloads. |

| MediaTek | Edge-AI and telecom AI partnerships | Telecom operators and device makers | Strategic expansion | MediaTek expanding AI chips across 6G infrastructure, edge AI, and automotive platforms. |

Semi Company Detail

AMD

| Category | Event / Announcement | Product / Technology | Key Customers / Partners | Notes |

| Major hyperscaler deal | Multi-year 6-gigawatt AI infrastructure agreement | Instinct GPUs + EPYC CPUs | Meta | AMD will supply up to 6 GW of Instinct GPUs and EPYC CPUs for Meta’s AI data centers, with first deployments starting in 2H 2026. |

| Financial structure of Meta deal | Partnership includes equity warrants | Instinct GPU roadmap | Meta | Meta receives warrants that could convert to ~10% of AMD, aligning incentives with AMD’s AI growth. |

| Earlier hyperscaler deal (still impacting 2026) | Multi-year AI chip supply agreement | Instinct GPUs | OpenAI | OpenAI signed a similar 6 GW supply agreement, positioning AMD as a second supplier to major AI labs. |

| Rack-scale AI platform launch | AMD unveiled Helios rack-scale architecture | Helios AI rack | Meta (OCP) | AMD’s first rack-scale system competes directly with NVIDIA’s NVL systems and hyperscaler racks. |

| Next-gen GPU roadmap | AMD previewed MI400-series accelerators | Instinct MI430X / MI440X / MI455X | Cloud providers & hyperscalers | The MI400 family targets next-generation AI training and inference infrastructure. |

| AI supercluster deployments | Large AI clusters planned using AMD GPUs | Instinct MI450 + Helios racks | Oracle Cloud | Oracle is building AI superclusters with ~50,000 Instinct GPUs. |

| Next-generation CPU roadmap | EPYC “Venice” server CPUs announced | Zen-based EPYC processors | Hyperscalers and AI data centers | Venice CPUs will pair with MI450 GPUs in AI racks. |

Broadcom

| Category | Event / Announcement | Product / Technology | Key Customers / Partners | Notes |

| Explosive AI revenue growth | AI semiconductor revenue more than doubled year-over-year to $8.4B in Q1 FY2026 | Custom AI ASICs + networking silicon | Hyperscalers and AI labs | AI demand is now the primary driver of Broadcom’s semiconductor business, fueling 29% revenue growth to $19.3B. |

| Massive AI chip forecast | Broadcom projected over $100B in AI chip revenue by 2027 | Custom XPUs | Hyperscalers including Google, Microsoft, Amazon, Meta | |

| Hyperscaler ASIC programs expanding | Broadcom confirmed six major AI chip customers | Custom AI accelerators | Google, Meta, OpenAI, Anthropic, others | |

| Anthropic deployment | Plans to supply 1 GW of AI chips in 2026, scaling to 3 GW by 2027 | Custom AI ASICs | Anthropic | |

| Advanced chip-stacking technology | Broadcom developing 3D-stacked AI chips, targeting 1M units shipped by 2027 | Advanced packaging / chip stacking | Fujitsu and other AI customers | Improves bandwidth and power efficiency for AI workloads, enabling larger accelerators. |

| Networking leadership for AI clusters | Continued growth of Tomahawk Ethernet switches and SerDes technologies | AI data-center networking | Hyperscalers | |

| Next-gen switching roadmap | Development of 1.6T–3.2T networking and terabit switching | DC switching silicon | Cloud and hyperscale infrastructure providers | Next-generation AI clusters will require major upgrades to data-center networking, strengthening Broadcom’s position. |

| VMware integration progress | VMware Cloud Foundation becoming a major enterprise software platform | Infrastructure software | Enterprises and cloud providers | VMware revenue and subscriptions continue to grow as Broadcom integrates the platform into its enterprise strategy. |

| Capital allocation | Announced $10B share buyback program alongside strong earnings | Corporate finance | Investors | Confidence in long-term AI demand and cash flow. |

Intel

| Category | News Item | Details | Notes |

| AI accelerator roadmap | Falcon Shores canceled | Intel decided not to commercialize the Falcon Shores AI GPU and will use it as a development platform for future architectures. | A reset of Intel’s AI accelerator strategy after delays and competitive pressure. |

| New AI GPU | Crescent Island inference GPU | Intel unveiled a new AI inference-focused GPU based on Xe architecture with large memory capacity for enterprise servers. | Intel is pivoting toward cost-efficient inference workloads, a massive market expected to outgrow training. |

| AI partnerships | Technical collaboration with SambaNova | Intel announced a multi-year partnership with SambaNova Systems to advance AI infrastructure. | Is Intel leaning into AI startups to strengthen its ecosystem? |

| Client AI chips | Core Ultra Series 3 launch | Intel introduced processors for AI PCs built on its 18A manufacturing node. | Shows Intel’s push to lead the AI PC market, where it has stronger OEM relationships. |

| Next-gen AI architecture | Jaguar Shores roadmap | Intel is developing a future AI platform called Jaguar Shores, expected to follow Gaudi accelerators. | Intel’s longer-term attempt to build rack-scale AI systems. |

| AI accelerator deployment | Gaudi ecosystem expansion | Gaudi accelerators are available in systems from vendors like Dell, HPE, Lenovo, and Supermicro. | Intel’s main foothold in data-center AI currently remains Gaudi accelerators. |

| Data-center CPU roadmap | Clearwater Forest Xeon delay | Intel pushed back its next-generation Xeon server processor to 1H 2026 due to packaging challenges. | Ongoing manufacturing and packaging challenges |

Marvell

| Category | Event / Announcement | Product / Technology | Key Customers / Partners | Notes |

| Major acquisition (AI connectivity) | Completed $3.25B acquisition of Celestial AI | Photonic Fabric optical interconnect | AI cloud providers, hyperscalers | Expands Marvell’s ability to build optical interconnects for large AI clusters, improving bandwidth and power efficiency in data-center fabrics. |

| Optical interconnect strategy | Celestial AI technology integrated into Marvell’s data-center portfolio | Silicon photonics + chip-to-chip optical links | Hyperscale AI data centers | Photonic Fabric enables high-bandwidth, low-latency connectivity between AI accelerators |

| Networking acquisition | Announced $540M acquisition of XConn Technologies | CXL switching and AI chip interconnect | AI infrastructure vendors | XConn’s technology enables AI accelerator pooling and memory disaggregation using CXL fabrics. |

| Financial outlook tied to AI | Custom AI chips expected to drive 20%+ growth in ASIC revenue | AI ASICs | AWS and hyperscalers | Demand for Trainium-related silicon is expected to drive strong revenue growth in 2026. |

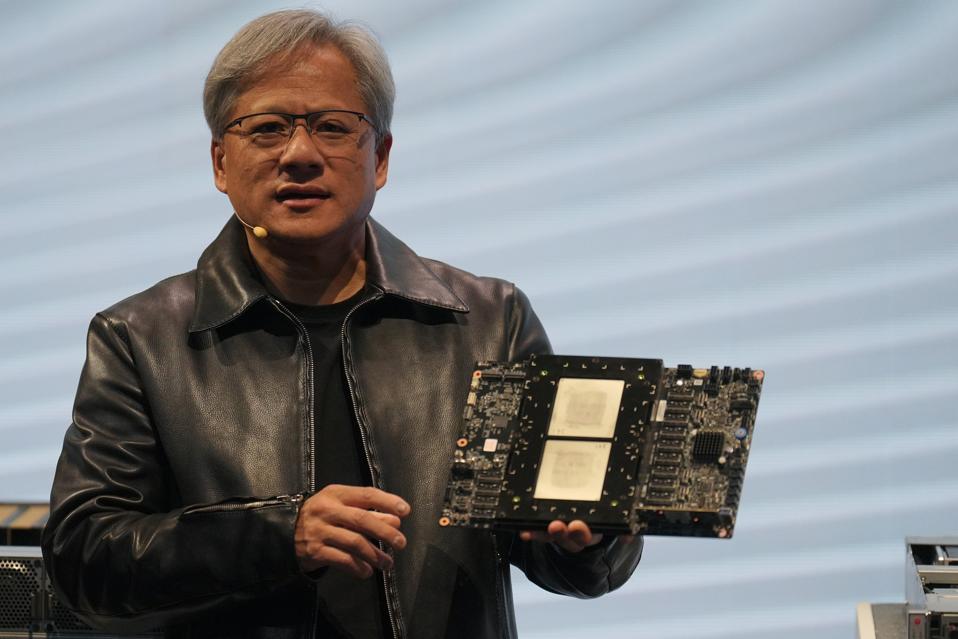

NVIDIA

| Category | Event / Announcement | Product / Technology | Key Customers / Partners | Notes |

| Next-gen AI platform launch | NVIDIA unveiled the Rubin AI platform, successor to Blackwell | Rubin GPU + Vera CPU + NVLink 6 fabric | Hyperscalers, AI labs | Rubin is a six-chip architecture designed for AI factories, integrating CPU, GPU, networking, and memory into a single rack-scale system. |

| Rack-scale AI architecture | Introduction of NVL72 rack systems built on Rubin superchips | Vera Rubin NVL72 | Hyperscalers and AI infrastructure providers | |

| Software ecosystem expansion | Expanded collaboration to optimize enterprise AI stack | CUDA + AI software stack | Red Hat | Joint effort integrates Rubin with Red Hat Enterprise Linux, OpenShift, and hybrid-cloud AI tooling. |

| AI infrastructure investment | NVIDIA committed $4B to photonics companies | Optical networking for AI clusters | Lumentum, Coherent | Investments to accelerate optical interconnects critical for scaling AI clusters and reducing networking bottlenecks. |

| Strategic optics partnerships | Multiyear agreements for advanced optical networking technology | Silicon photonics, optical transceivers | Lumentum, Coherent | Agreements include multibillion-dollar purchase commitments and capacity guarantees |

| AI-native telecom ecosystem | NVIDIA joined telecom leaders to build AI-native 6G infrastructure | AI-RAN platforms | Nokia, Cisco, Deutsche Telekom, SK Telecom, others | Expands NVIDIA GPUs into telecom networks as AI-accelerated radio infrastructure. |

| Networking architecture expansion | NVLink ecosystem expanding with CPU partners | NVLink Fusion interconnect | Intel, Fujitsu, Arm ecosystem | Allows CPU vendors to integrate NVLink, strengthening NVIDIA’s GPU-centric compute ecosystem. |

| Competitive pressure from custom silicon | Hyperscalers expanding in-house AI chips | Custom ASICs | Amazon, Google, Microsoft, Meta | Growing competition from custom silicon is pushing NVIDIA to strengthen system-level differentiation. |

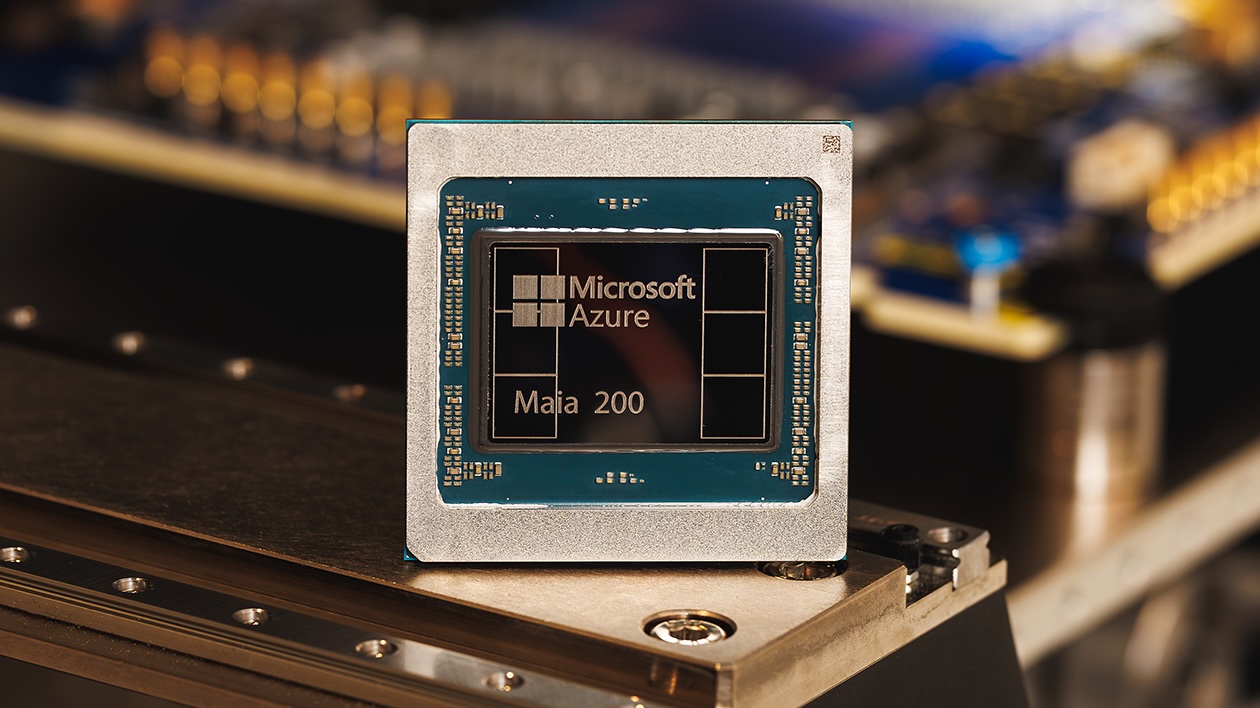

Hyperscaler ASIC Business

| Company | AI Chip | Primary Use | Key Silicon Partner(s) |

| TPU | Training + inference | Broadcom + Marvell + TSMC | |

| Meta | MTIA ( | Recommenders + generative AI | Broadcom + TSMC |

| Microsoft | Maia | Azure AI infrastructure | Marvell + TSMC |

| Amazon (AWS) | Trainium + Inferentia | Training (Trainium) + inference (Inferentia) | Annapurna Labs + TSMC |

| ByteDance | Custom XPU (unnamed publicly) | Training + inference | Broadcom (rumored) |

| OpenAI | Custom accelerator (Titan / internal project) | Frontier model training + inference | Broadcom |

Google TPU

TPU v7 “Ironwood”

- Ironwood is Google’s seventh-generation TPU—most performant and scalable custom AI accelerator to date, first designed specifically for inference

Production Volume & Manufacturing

- Fubon projects total TPU v7 racks will reach about 36,000 units in 2026

- Total TPU production estimated at 3.1 to 3.2 million units in 2026, constrained mainly by advanced packaging capacity

- In January 2026, Google confirmed that its custom-designed TPUs have outshipped general-purpose GPUs in volume for the first time

Partnership Structure Evolution

- Google is teaming with MediaTek and Broadcom for Ironwood—spreading cost and production risk

- MediaTek handles I/O and manufacturing coordination with TSMC; Broadcom continues developing high-performance cores

- Google selected MediaTek partially because the company offers costs approximately 20–30% lower than alternative partners

- MediaTek focuses on cost-sensitive variants such as the TPUv7e, with production scheduled to begin in 2026

Selling TPUs

- Anthropic has access to as many as 1 million Google TPUs, expected to bring over a gigawatt of new AI compute capacity online in 2026

- Anthropic has committed $21 billion to custom chip orders via Broadcom, securing nearly 1 million Google TPU v7p units for delivery by late 2026

- Meta signed a multi-billion-dollar TPU lease agreement to access chips via Google Cloud for training AI models

- Meta is separately in talks to purchase TPUs outright for deployment in its own data centers starting in 2027

TCO Advantage vs. NVIDIA

- From Google’s perspective, all-in TCO per Ironwood chip for full 3D Torus configuration is ~44% lower than the TCO of a GB200 server

- Even when offering prices to external customers, TPU v7’s TCO is about 30% lower than NVIDIA’s GB200 and about 41% lower than the upcoming GB300

OpenAI “Titan”

Partnership Details

- OpenAI and Broadcom collaboration covers 10 gigawatts of custom AI accelerators

- OpenAI designs the accelerators and systems; Broadcom develops and deploys

- Deployments targeted to start in 2H 2026, completing by end of 2029

- Systems will use Ethernet-based networking

Chip Specifications

- OpenAI’s chip codenamed “Titan” will use TSMC’s N3 process, mass production expected end of 2026

- Second-generation “Titan 2” reportedly planned on more advanced A16 process

Revenue Timeline for Broadcom

- Broadcom CEO Hock Tan told investors he doesn’t expect much revenue from the OpenAI partnership in 2026

- “I appreciate the fact that it is a multiyear journey that will run through 2029”

- Internal push to roll the chip out in Q2 2026 has already slipped to Q3 at the earliest

Meta MTIA

Current Status

- Meta accelerated deployment of MTIA v3, codenamed “Iris”, 3rd generation custom silicon

- As of February 2026, Iris chips have moved into broad deployment across Meta’s massive data center fleet

- Flagship Iris was designed primarily with assistance from Broadcom

- Fabricated on TSMC’s cutting-edge 3nm process, integrates eight HBM3E 12-high memory stacks with bandwidth exceeding 3.5 TB/s

Setbacks in Training Chip Program

- Meta scrapped an advanced AI training chip after design roadblocks

- The MTIA program has had a history of setbacks, including scrapping an earlier inference chip after it underperformed

- Meta announced a multiyear agreement with AMD on February 24 worth more than $100 billion for MI450 GPUs

2026 Roadmap

- MTIA-2 already in production, slated to debut in H1 2026, built on TSMC’s 3nm with CoWoS-S packaging

- MTIA-3 set for H2 2026 debut, with GUC handling back-end packaging

- MTIA v4 “Santa Barbara” readying for latter half of 2026 with HBM4 memory and liquid-cooling systems exceeding 180kW per rack

- Arke inference-only chip variant developed in collaboration with Marvell (not Broadcom)—representing supply chain diversification

Partner Diversification Risk

- Meta co-developed its MTIA chips with Broadcom, but is reportedly diversifying with Marvell for certain variants

- Meta has committed up to $135 billion in capital expenditures for 2026 to build out AI infrastructure