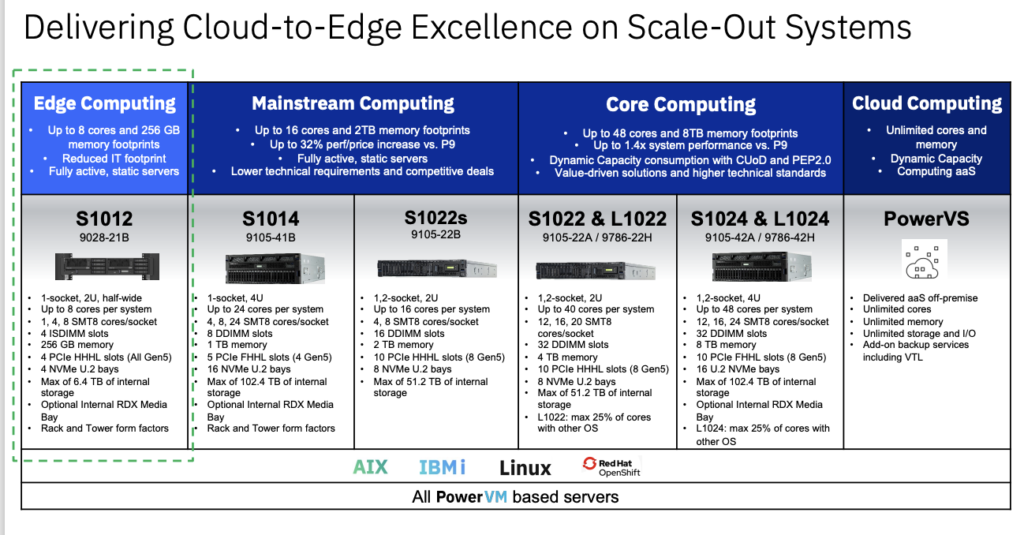

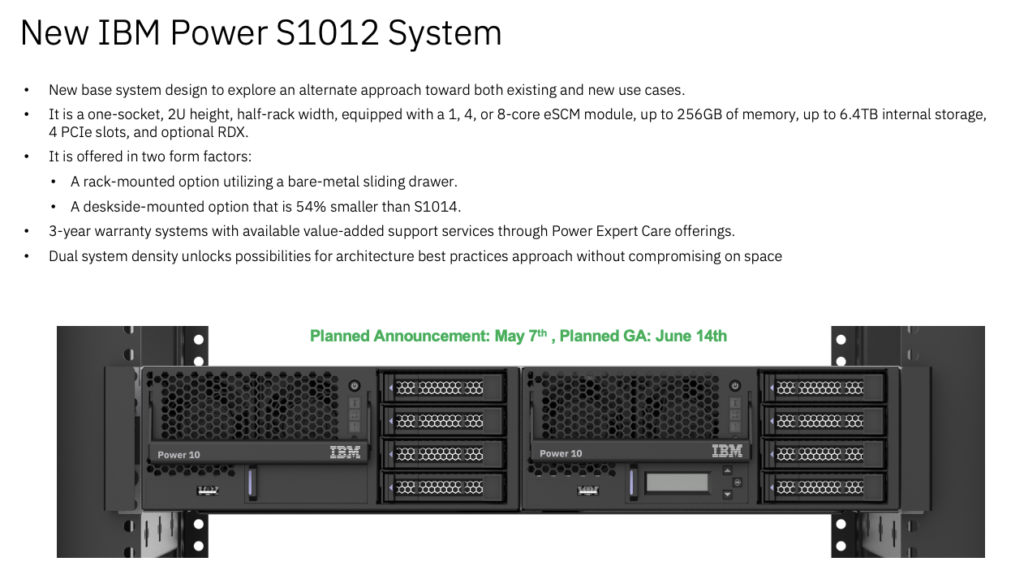

IBM expanded its server portfolio with the new IBM Power S1012. Based on IBM’s Power10 processor, the server offers up to three times more performance per core than its predecessor, the Power S812, in a 1-socket, half-width form factor.

The new server is designed for AI inferencing across core data centers, cloud, and edge locations. Its key features include:

- Processor and Performance: The server uses IBM’s Power10 processor in a 1-socket, half-width configuration and delivers up to three times the performance per core of its predecessor, the Power S812.

- AI Inferencing at the Edge: IBM describes the Power S1012 as optimized for AI workloads and is targeting it at AI inferencing at the edge.

- AI Acceleration and Security: The server includes four Matrix Math Accelerators (MMAs) per core, which speed up the matrix calculations common in AI inferencing. It also employs transparent memory encryption to protect data in transit and at rest, safeguarding sensitive information against unauthorized access and leaks.

- Form Factor: It is available in both a 2U rack-mounted and a tower deskside form factor.

- Remote Management and Reliability: Advanced remote management features are integrated into the Power S1012, complemented by high-availability features, including redundant hardware and failover mechanisms.

- Efficiency and Space Saving: The server’s 2U half-wide design allows organizations to reduce the physical footprint of their IT infrastructure by up to 75% compared to the Power S1014 4U rack server without sacrificing performance.

- Market and Client Focus: The Power S1012 is targeted at small to midsize organizations.
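
The 75% footprint figure in the list above can be checked with simple arithmetic. The sketch below is only an illustration: it assumes footprint is measured as rack height times the fraction of rack width occupied, and that the Power S1014 is a full-width 4U chassis. The function name `rack_footprint` is hypothetical and not taken from any IBM material.

```python
def rack_footprint(height_u: float, width_fraction: float) -> float:
    """Rack space occupied, in full-width rack-unit equivalents (assumed metric)."""
    return height_u * width_fraction


# Power S1012: 2U tall, half the rack width
s1012 = rack_footprint(height_u=2, width_fraction=0.5)

# Power S1014: 4U tall, assumed to span the full rack width
s1014 = rack_footprint(height_u=4, width_fraction=1.0)

# Relative reduction in occupied rack space
reduction = 1 - s1012 / s1014
print(f"Footprint reduction: {reduction:.0%}")  # prints "Footprint reduction: 75%"
```

Under these assumptions, the S1012 occupies the equivalent of 1U of full-width rack space against 4U for the S1014, which is consistent with the "up to 75%" claim.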

The Power S1012 will be generally available on June 14, 2024, directly from IBM or through certified Business Partners.

Analysis

IBM’s Power S1012 meets a critical emerging need of modern enterprises: supporting advanced applications at the edge with a secure, powerful, and economically viable platform. This launch strengthens IBM’s competitive edge in a market increasingly prioritizing decentralization, real-time processing, and AI-driven insights. This is an excellent offering for IBM’s Power customers.