Call Notes: Q4 Semiconductor Tracker

We’re in a moment that’s less about individual chip performance and more about processing-at-scale. It’s all about sprawling AI racks, optics, interconnect, and custom silicon hungry for scale, speed, and a story.

NAND Insider Newsletter: February 4, 2025

Every week NAND Research puts out a newsletter for our industry customers. Below is an excerpt from this week’s issue, dated February 4, 2025.

NAND Insider Newsletter: January 21, 2025

Every week NAND Research puts out a newsletter for our industry customers. Below is an excerpt from this week’s.

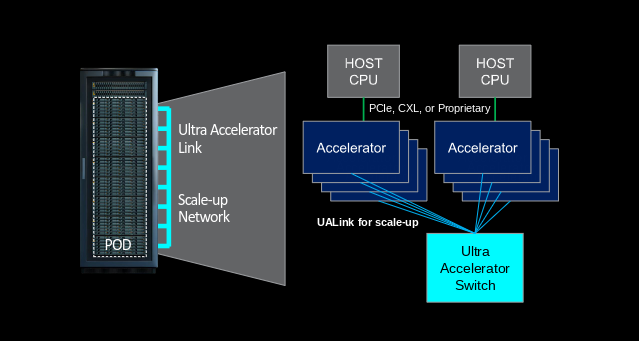

Research Note: UALink Consortium Expands Board, Adds Apple, Alibaba Cloud & Synopsys

The Ultra Accelerator Link Consortium (UALink), an industry organization taking a collaborative approach to advance high-speed interconnect standards for next-generation AI workloads, announced an expansion to its Board of Directors, welcoming Alibaba Cloud, Apple, and Synopsys – joining existing member companies like AMD, AWS, Cisco, Google, HPE, Intel, Meta, and Microsoft.

Quick Take: Apple’s OpenELM Small Language Model

Apple this week unveiled OpenELM, a set of small AI language models designed to run on local devices like smartphones rather than relying on cloud-based data centers. This reflects a growing trend toward smaller, more efficient AI models that can operate on consumer devices without significant computational resources.