Call Notes: Quarterly Semiconductor Update (March 2026)

Every quarter I participate in a call for buy-side investment analysts focused on the broader datacenter/hyperscaler semiconductor ecosystem. Here, I’m sharing the raw notes I used to drive that call.

Call Notes: Compute Hardware & Semiconductor Ecosystem

Every quarter I participate in a call for buy-side investment analysts focused on compute hardware and the broader semiconductor ecosystem. Here, I’m sharing the raw notes from that call.

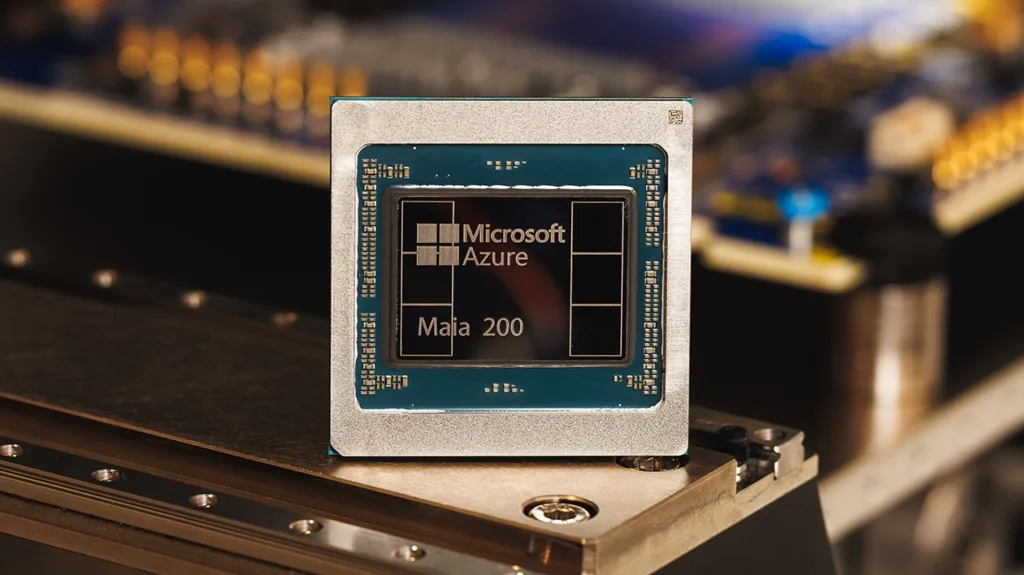

Research Note: Microsoft Azure Maia 200 Inference Accelerator

Microsoft recently announced its second-generation custom AI accelerator, the Maia 200. The new chip is an inference-optimized alternative to the third-party GPUs in Microsoft’s Azure infrastructure. The company says the accelerator delivers 30% better performance per dollar than existing Azure hardware while supporting OpenAI’s GPT-5.2 models and Microsoft’s own synthetic data generation workloads.

Call Notes: Q4 Semiconductor Tracker

We’re in a moment that’s less about individual chip performance and more about processing-at-scale. It’s all about sprawling AI racks, optics, interconnect, and custom silicon hungry for scale, speed, and a story.

NAND Insider Newsletter: January 21, 2025

Every week NAND Research puts out a newsletter for our industry customers. Below is an excerpt from this week’s.

NAND Insider Newsletter: January 5, 2025

Every week NAND Research puts out a newsletter for our industry customers. Below is an excerpt from this week’s.