Memory & Flash Crisis Update (March 2026)

The global memory market entered 2026 in a state of structural supply constraint. AI infrastructure demand has reallocated semiconductor manufacturing capacity toward HBM for GPU accelerators, creating scarcity in conventional DRAM and NAND flash products.

How to Think about VAST Data

If you’ve been tracking the enterprise infrastructure space for the past few years, you’ve probably encountered VAST Data. And if you’re like most IT practitioners I talk to, you’ve probably filed them in the “high-performance storage vendor” folder in your brain.

Everpure: Pure Storage’s Rebrand & Evolution to Data Management Platform

Pure Storage has rebranded as Everpure, reflecting a multi-year evolution from its roots in performance flash storage into the broader data management market. The company is also acquiring 1touch.

SUSE Acquires Losant: Extending the Open Edge Stack into Industrial IoT

SUSE announced the acquisition of Losant, a Cincinnati-based industrial IoT (IIoT) platform. The acquisition marks a material expansion for SUSE, moving the company beyond its primary role as an edge infrastructure provider (built around SUSE Linux Micro and K3s) into the application and orchestration layer, where operational data from industrial devices is aggregated, visualized, and acted on.

Dell Expands Private Cloud Portfolio with Nutanix AHV Support

Dell Technologies recently announced the expansion of its Dell Private Cloud offering to support Nutanix AHV as a third hypervisor option, complementing existing support for VMware vSphere and Red Hat OpenShift Virtualization.

IBM FlashSystem: Next Generation, Autonomous Storage Meets Agentic AI

IBM recently announced a significant refresh of its FlashSystem all-flash storage portfolio, replacing the 5300, 7300, and 9500 product lines with new 5600, 7600, and 9600 models. The company also introduced its new FlashSystem.ai, an agentic AI administration layer that IBM claims can reduce manual storage management effort by up to 90%.

Verizon’s Pivot Opens the Door: Why Private 5G Pioneers Are Prime M&A Targets in 2026

Real, paying customers are driving demand for edge inference in manufacturing and logistics, and Verizon is backing that with One Fiber.

Why Cisco Builds Silicon for the Data Center but Buys It for Wi‑Fi

Two domains. Two silicons. One intentional strategy.

The Carrier Pivot: What Verizon’s AI Strategy Signals for the Next Phase of Private 5G

What stands out is Verizon’s insistence that this is not speculative infrastructure building.

Call Notes: Compute Hardware & Semiconductor Ecosystem

Every quarter I participate in a call for buy-side investment analysts focused on compute hardware and the broader semiconductor ecosystem. Here, I’m sharing the raw notes from that call.

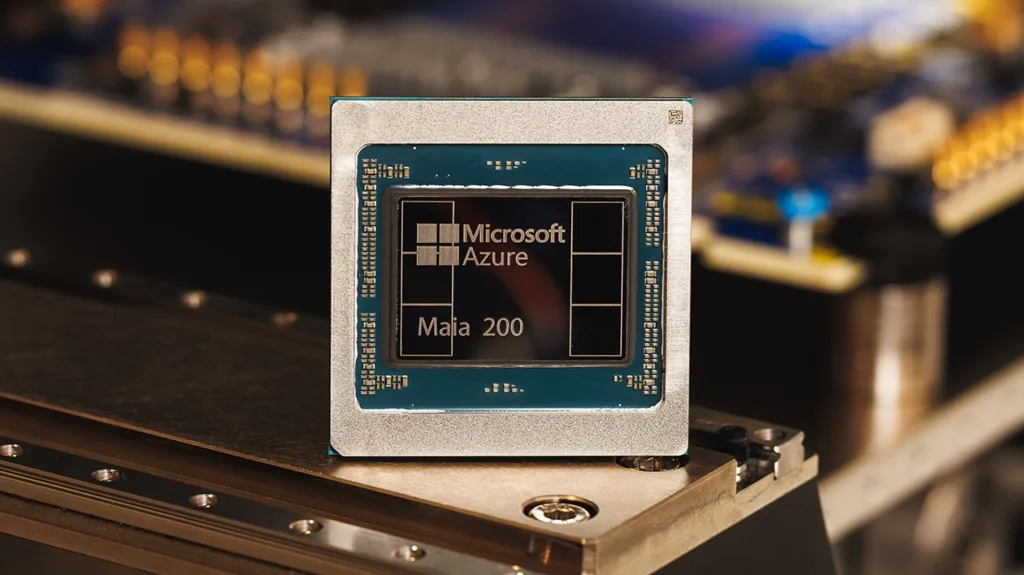

Research Note: Microsoft Azure Maia 200 Inference Accelerator

Microsoft recently announced its second-generation custom AI accelerator, the Maia 200. The new chip is an inference-optimized alternative to third-party GPUs in its Azure infrastructure. The company says the accelerator delivers 30% better performance per dollar than existing Azure hardware while supporting OpenAI’s GPT-5.2 models and Microsoft’s own synthetic data generation workloads.

Research Note: Commvault Unified Data Vault, S3-Compatible Protection for Modern Workloads

Commvault recently announced its Commvault Cloud Unified Data Vault, a cloud-native service that extends its air-gapped protection capabilities to data written using the S3 protocol. The service provides an S3-compatible endpoint that applies policy-driven protection to S3-based workloads without requiring agent installation or custom integration work.

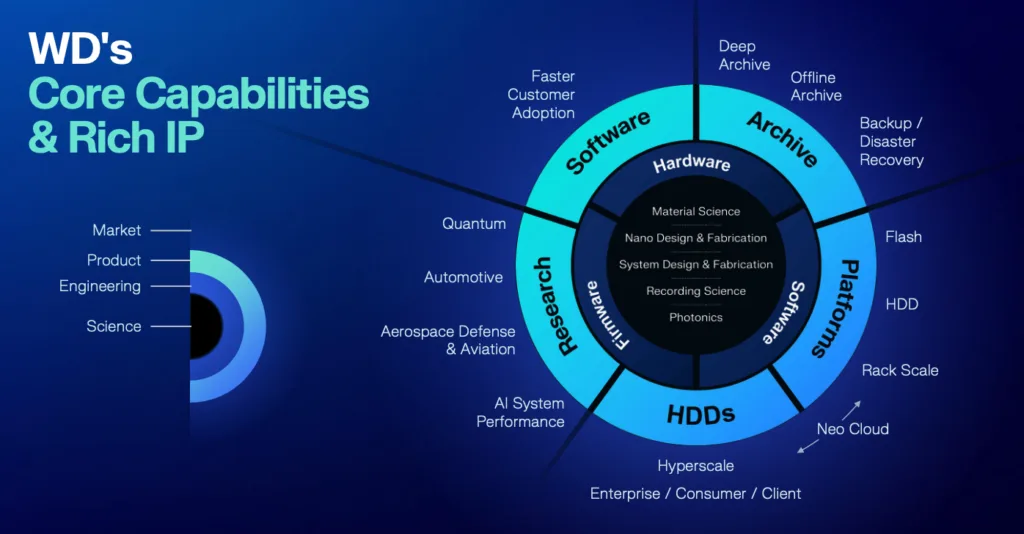

Research Note: WD Innovation Day

Western Digital’s February 2026 Innovation Day showed a company fundamentally transformed from its legacy PC-centric storage roots into a critical AI infrastructure provider. The presentations unveiled breakthrough innovations that challenge long-held assumptions about hard drive technology limits.

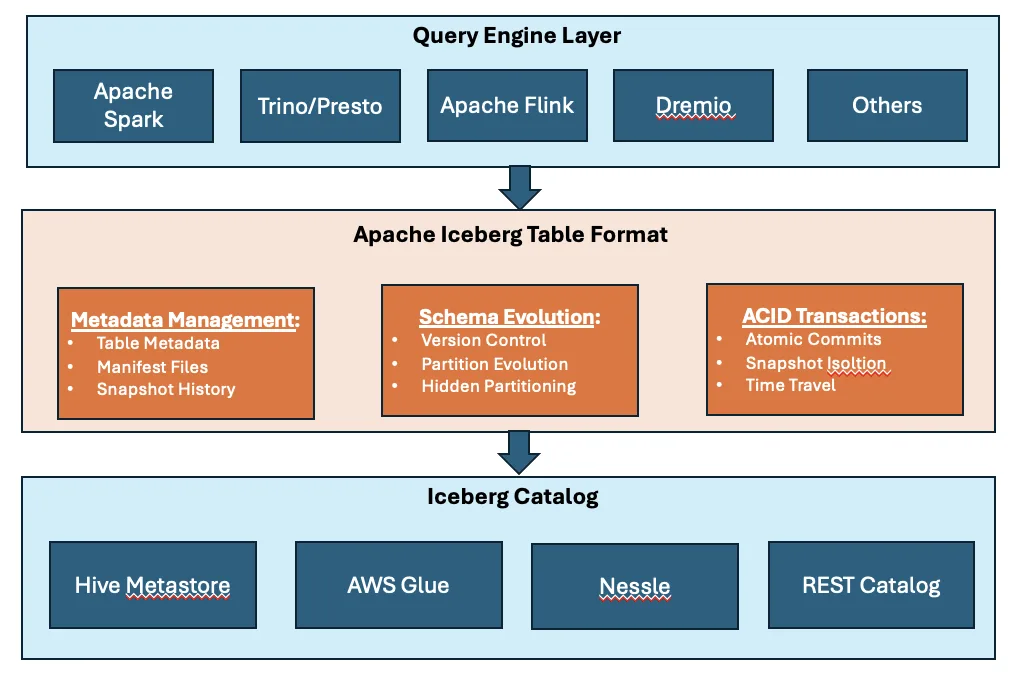

Explainer: What Does It Mean to “Support Apache Iceberg” in a Storage System

Over the past several years, “Apache Iceberg support” has quietly become table stakes in modern data infrastructure conversations. Storage vendors list it in press releases, lakehouse platforms lead with it, and architects assume it.

Broadcom Heats the Wi‑Fi 8 Conversation

Qualcomm is signaling readiness through ecosystem validation, while Broadcom is sampling silicon to OEMs to kickstart hardware development.